Raspberry Pi Security Robot

Controlled by a Raspberry Pi, our security robot tries to center itself on a green target while continuously scanning the area in front of it for authorized persons or intruders. To do so, the robot rotates until it finds the target and then centers itself once the target is somewhere in front of it. This image processing is done with OpenCV. For facial recognition, we used the dlib Python API, which let us quickly implement a recognition system on the robot. Finally, the drive motors are controlled with software PWM and the camera gimbal with hardware PWM, with the driving logic for both implemented in software.

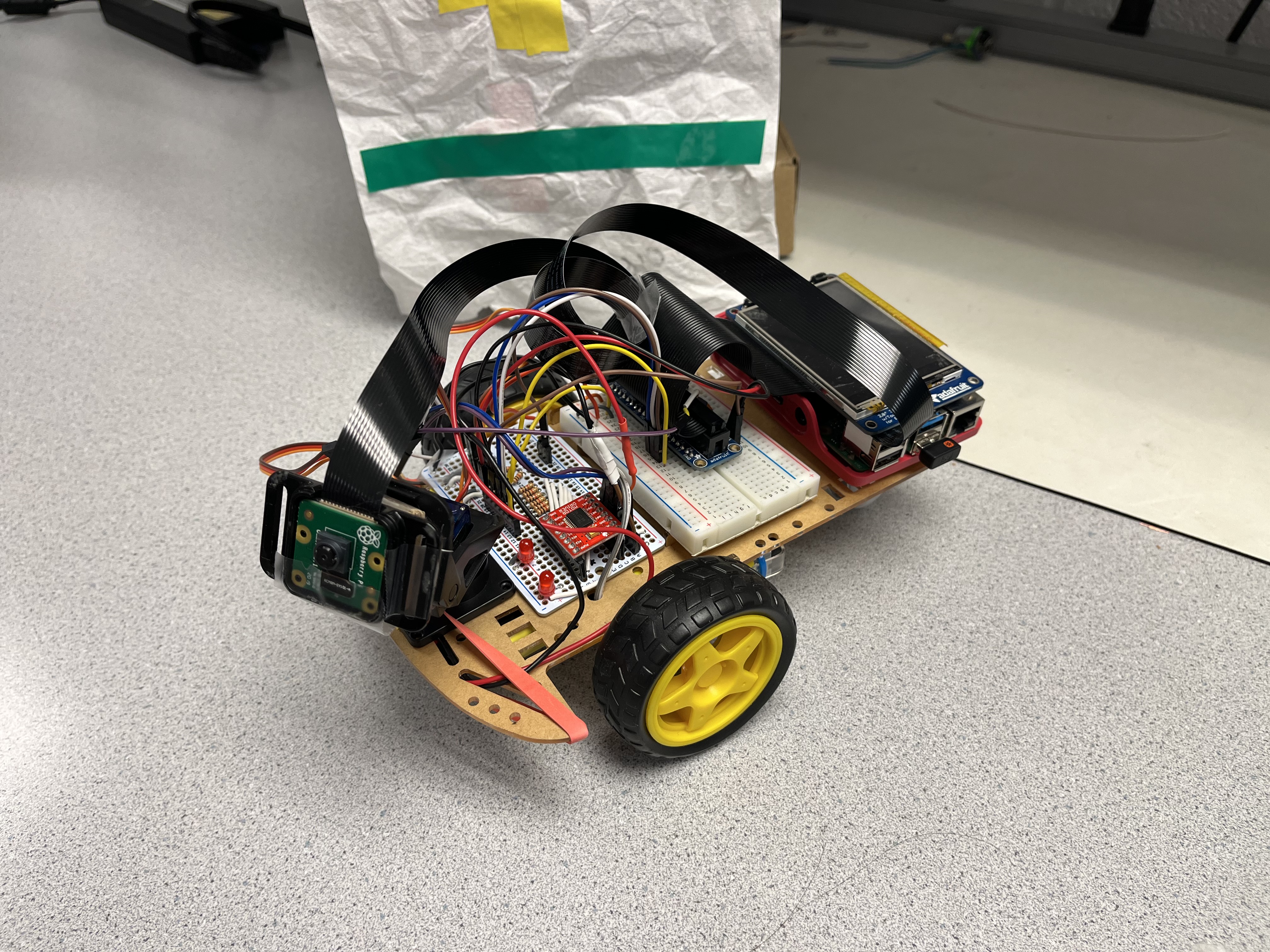

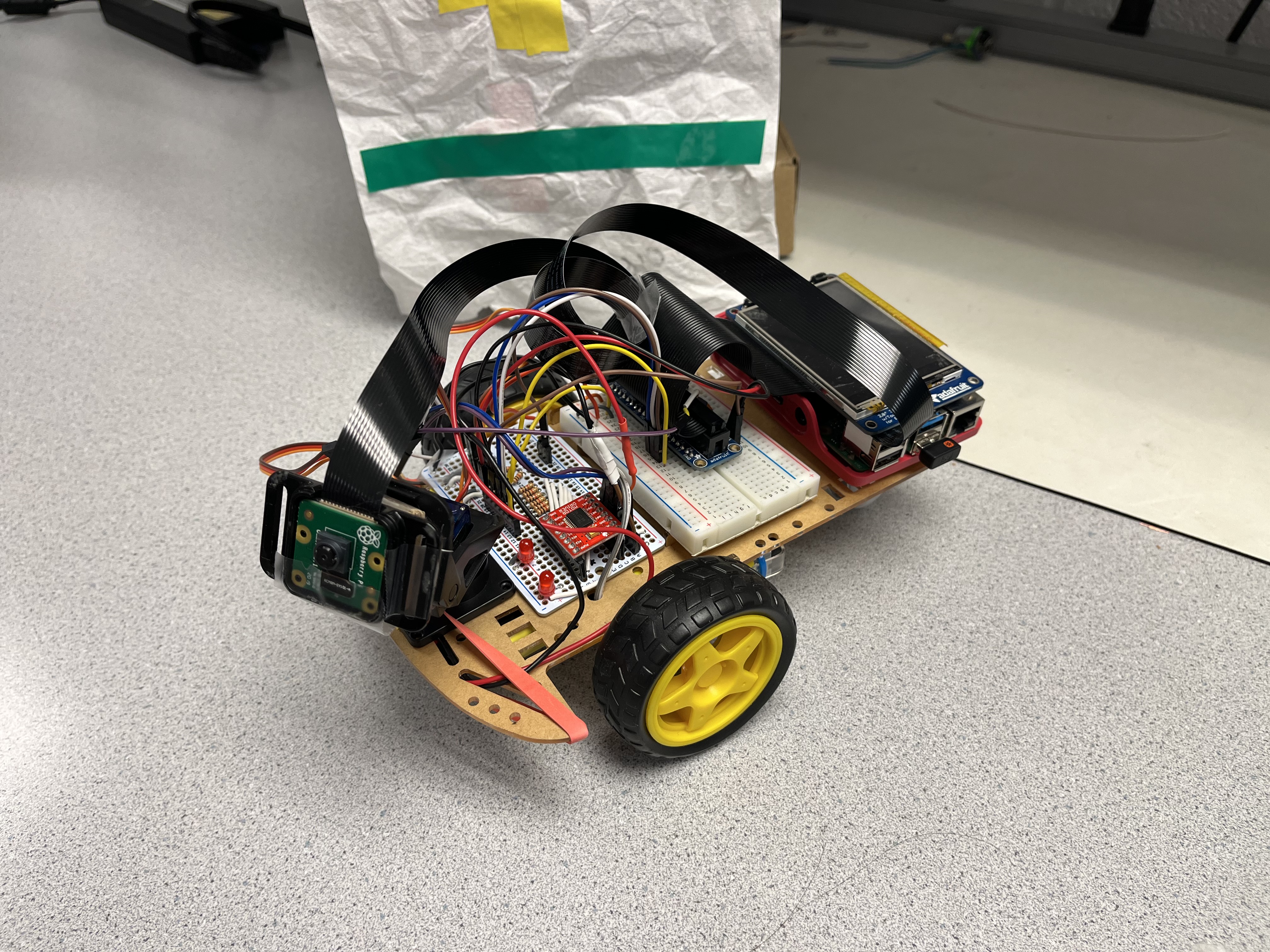

We split the project into several parts to make designing, testing, and implementing each function easier. We separated the robot’s operation into these tasks: Camera, PyGame Interface, Facial Detection and Recognition, Target/Color Detection, Gimbal Control, and Motor Control. After testing each task separately, we integrated them into one system, which became our finished security robot. The robot displays at most three identified faces on screen, each labeled with a name. If any face is unrecognized, a warning message is displayed on the PiTFT screen, signaling that an unknown and possibly malicious person is in the area. The final product deviates from our original design, in which the robot would patrol a set path while performing all of the functions described above. Rather than patrol, the robot rotates about a point and searches for a target to face. The figure below labels the components and functions of the robot.

The Raspberry Pi Camera v2 is wired to the Pi's dedicated camera port with a 2-foot ribbon cable and is attached to the camera gimbal. The camera gimbal was assembled using the instructions from Adafruit [13]. For easy assembly and disassembly, the gimbal is attached with velcro, and its position was minimally affected during operation.

The motor driver and all motors were mounted onto the protoboard to lighten the assembly and make the circuit more compact. This also helped center the distribution of mass, which is essential for ensuring our robot behaves as intended. The circuitry followed design practices introduced in Lab 3, such as isolating the motor and Raspberry Pi power supplies and protecting the Pi GPIO pins. All motors (2 DC motors and 2 SG90 servo motors) are powered by a 6V AA battery supply. The DC motors are driven by a Sparkfun TB6612FNG Dual-Channel Motor Controller, while the SG90s are driven directly by the Pi using hardware PWM.

To use the camera with OpenCV and dlib, we had to switch the Pi's camera settings to the legacy stack [7]. There are options other than OpenCV, but most of them also use the legacy stack. Future development may not require this. We followed the Canvas tutorial “Pi Camera and OpenCV Guidelines” to learn how to access the camera. The camera is opened with:

video_cap = cv2.VideoCapture(0)

0 is the index of the video device that the camera is usually listed as. Looking in the /dev folder may reveal multiple video devices, and we found that when ‘0’ did not work there was an error in opening the camera. Possible solutions are described below. To read the camera stream, we used return_val, frame = video_cap.read() to get a frame, followed by cv2.imshow('frame', frame) and key = cv2.waitKey(1) to display it. The waitKey() call is required for the frame to be shown, and the argument 1 is a 1 millisecond delay.
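The read-and-display loop described above might be organized as in the following sketch. It is not our project script: the function names, the max_frames limit, and the 'q' key to quit are assumptions made for illustration. OpenCV is imported inside preview_camera() so that the frame-reading helper can be exercised without a camera attached.

```python
def read_frames(cap, max_frames):
    """Yield up to max_frames frames from any object with an OpenCV-style read()."""
    for _ in range(max_frames):
        ok, frame = cap.read()
        if not ok:
            # read() reports failure through its first return value
            break
        yield frame


def preview_camera(device_index=0, max_frames=300):
    """Show a live preview window; needs OpenCV and an attached camera."""
    import cv2  # imported here so read_frames() works without OpenCV installed

    cap = cv2.VideoCapture(device_index)
    if not cap.isOpened():
        raise RuntimeError(f"could not open video device {device_index}")
    try:
        for frame in read_frames(cap, max_frames):
            cv2.imshow("frame", frame)
            # waitKey(1) lets the window refresh for about 1 ms; 'q' quits
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        # Release the device so another process can open it later
        cap.release()
        cv2.destroyAllWindows()
```

Checking isOpened() right after construction surfaces a wrong device index immediately instead of failing later inside the loop.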

The PyGame interface is quite simple. It begins with a title screen that has a START and a QUIT button, which prevents the robot from beginning operation as soon as the script is run. When the START button is pressed, the robot begins operation. If the QUIT button is pressed, the program closes.

To achieve facial detection and recognition, we used the dlib machine learning API, which includes a facial detection model, a facial landmark model, and a facial recognition tool. Installing dlib on the Pi was fairly simple: it only requires that CMake is installed and that you are consistent about which Python version you use. We recommend installing with python3 -m pip install dlib so that dlib is installed for the interpreter that will run the scripts; a dlib installed for python3 cannot be used by scripts run with python2. Furthermore, certain Python 3.x versions require their own matching dlib version, so being cognizant of that kept our code free of errors due to version inconsistencies. The facial detection model used in our project is built from the “Histogram of Oriented Gradients (HOG) feature combined with a linear classifier, an image pyramid, and sliding window detection scheme” [1a]. This model is fairly accurate, but it struggles to detect faces of darker complexion in certain lighting conditions. This can be seen in our demo, which also includes an example where the facial recognition works better. To recognize a face, we used dlib’s shape predictor, “shape_predictor_5_face_landmarks.dat”, to place a 5-point landmark on each detected face. The landmark shape is shown below:

With this, the facial recognition tool, dlib_face_recognition_resnet_model_v1.dat, takes the image and facial landmarks as input and creates a 128-D “encoding”, that is, it maps the input to a 128-D vector space that we can process for facial recognition. In the facial recognition example, the authors state that “you can perform face recognition by mapping faces to the 128D space and then checking if their Euclidean distance is small enough” [1b]. The authors also state that “a distance threshold of 0.6 leads to a 99.38% accuracy on the standard LFW face recognition benchmark”, which makes this model reasonable for our use. To calculate the distance between vectors, we cast the 128-D image encodings to numpy arrays and calculated the distance using:

Distance = np.linalg.norm( face_encoding_being_compared - face_encoding_to_compare_to )
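As a minimal sketch of how this distance can be turned into an authorized/unauthorized decision, the function below compares one encoding against a dictionary of known encodings. The name match_face, the dictionary layout, and the use of a strict "less than 0.6" comparison are assumptions for illustration; only the Euclidean distance and the 0.6 threshold come from the dlib example.

```python
import numpy as np


def match_face(encoding, known_encodings, threshold=0.6):
    """Return (name, distance) for the closest known face.

    name is None when no known face is closer than the threshold,
    which is the case treated as an intruder.
    """
    best_name, best_dist = None, float("inf")
    for name, known in known_encodings.items():
        # Euclidean distance between two 128-D encodings
        dist = float(np.linalg.norm(np.asarray(encoding) - np.asarray(known)))
        if dist < best_dist:
            best_name, best_dist = name, dist
    if best_dist < threshold:
        return best_name, best_dist
    return None, best_dist
```

Because every detected face is compared against every authorized encoding, the cost of this loop grows linearly with the number of authorized users.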

Before the operation loop is entered, the program processes a list of authorized users and creates encodings of their faces. During operation, each detected face is compared against these encodings to determine whether the person in the vicinity is ‘authorized’ or ‘unauthorized’. For now, the PyGame interface draws RED or GREEN bounding boxes around detected faces and shows a big “INTRUDER ALERT” warning if an unknown face is identified.

To detect a certain color, we used the OpenCV library. OpenCV can be installed most directly with the command sudo apt-get install python3-opencv. Our object detection can be broken down into four steps:

Take a snapshot of the area in front of the robot using the RPi Camera. This image is taken at a 640x480 resolution and is upside down due to how we mounted the camera. The color scheme used is BGR, so the image has dimensions of 640x480x3, where the last dimension is the color channel.

Orient the frame correctly (180 degree rotation) and convert to HSV (Hue, Saturation, Value) color scheme by using cv2.cvtColor(frame, cv2.COLOR_BGR2HSV) [3]. This color scheme makes color detection easier because the channel parameters are easier to adjust for thresholding the image.

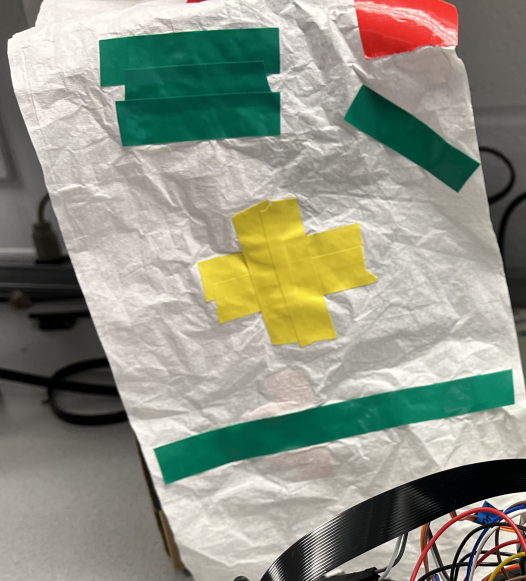

Create a binary mask for the image by thresholding with the cv2.inRange() function [3]. We chose to threshold on the color green, as it is quite easy to “see” in our testing space. The lower threshold is set to (77, 78, 56.25) and the upper threshold to (104, 255, 255). The hue mostly controls which “color” we select. The saturation controls “brightness”, where lower values make the color lighter. The value controls “darkness”, where lower values make the color darker. These are intuitions based on what we saw when determining the threshold values.

Set a left and right boundary of 3 pixel thickness and separation distance of 20% of the screen width (64 pixels). The color is detected on the right or left when a ‘1’ from the mask is in the boundary. We say that the target color is in front of the robot if both left and right boundaries detect the color.
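The masking and boundary steps above can be sketched with NumPy alone. This is an illustration under stated assumptions, not our project code: green_mask is a NumPy stand-in for cv2.inRange() (it returns True/False rather than 0/255), and detect_boundaries assumes the two 3-pixel strips sit symmetrically about the frame center, 64 pixels apart. The function names are hypothetical.

```python
import numpy as np

# Threshold values quoted in the text (H, S, V)
LOWER_HSV = np.array([77, 78, 56.25])
UPPER_HSV = np.array([104, 255, 255])


def green_mask(hsv, lower=LOWER_HSV, upper=UPPER_HSV):
    """True where all three HSV channels lie inside [lower, upper]."""
    return np.all((hsv >= lower) & (hsv <= upper), axis=-1)


def detect_boundaries(mask, separation=64, thickness=3):
    """Return (left_hit, right_hit) for two vertical strips of the mask.

    Assumed layout: the strips are placed symmetrically about the
    horizontal center of the frame, `separation` pixels apart.
    """
    center = mask.shape[1] // 2
    left_start = center - separation // 2 - thickness
    right_start = center + separation // 2
    left_hit = bool(mask[:, left_start:left_start + thickness].any())
    right_hit = bool(mask[:, right_start:right_start + thickness].any())
    return left_hit, right_hit
```

With this layout, a target wide enough to cover both strips reports (True, True), which is the "target in front of the robot" condition.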

The masking and boundary detection can be shown in the video below:

To scan the area with the camera, we used a camera gimbal with horizontal and vertical servos. We used hardware PWM, which is available on GPIO pins 12 and 13 [4]. To use hardware PWM, the Pi GPIO daemon must first be started with sudo pigpiod. The Python script then connects to the daemon with pi_hw = pigpio.pi(), and the PWM output is set with pi_hw.hardware_PWM(pin, frequency, duty_cycle) [5]. The duty_cycle argument is the duty-cycle fraction multiplied by $10^6$, so duty_cycle = 500000 sets a 50% duty cycle. The servo angle is changed by varying the pulse width between 1 and 2 ms at a 50 Hz PWM frequency [6]. To scan through a 180 degree space, we used the formula duty_cycle $= -\left( \frac{0.0625}{180} \right) (angle + 90) + 0.125$, which approximately achieves 180 degrees of motion. For the vertical servo, we fixed the angle at around 40 degrees to capture faces while the robot was on the lab table. For the horizontal servo, we varied the PWM duty cycle as the program ran, incrementing the angle by one degree every loop iteration. Due to the physical characteristics of the servo, we chose the angle range [0,200] because 180 degrees did not give enough rotation. Because the angle advances once per loop iteration, the image processing time set the sweep speed, and the camera swept the area in front of it at a reasonable speed.
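A small sketch of the duty-cycle computation follows. angle_to_duty applies the formula exactly as written in the text, and duty_to_pigpio scales the fraction to the integer that hardware_PWM expects. The helper names and the set_servo wrapper are hypothetical; pigpio is imported inside set_servo so the arithmetic can be checked on a machine without the daemon.

```python
def angle_to_duty(angle):
    """Duty-cycle fraction for a servo angle, per the linear formula in the text."""
    return -(0.0625 / 180) * (angle + 90) + 0.125


def duty_to_pigpio(duty_fraction):
    """pigpio expresses hardware PWM duty cycle as an integer out of 1,000,000."""
    return int(round(duty_fraction * 1_000_000))


def set_servo(pin, angle, frequency=50):
    """Drive a hardware-PWM pin; requires `sudo pigpiod` to be running."""
    import pigpio  # imported lazily so the helpers above work off the Pi

    pi_hw = pigpio.pi()
    if not pi_hw.connected:
        raise RuntimeError("could not connect to the pigpio daemon")
    pi_hw.hardware_PWM(pin, frequency, duty_to_pigpio(angle_to_duty(angle)))
    return pi_hw
```

In a sweep loop one would normally create the pigpio.pi() connection once and reuse it, rather than reconnecting on every call as this wrapper does.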

To control the motors, we used the motor driver mentioned previously, which we had also used in Lab 3 of the class. While the camera sweeps left and right, the script performs color detection at angles in the range [95,105], which is approximately the center of the robot. After scanning this range, the robot turns right if it detects the color on the right boundary and not the left. If the target color is detected on both the left and right boundaries, the robot does not move, as it is facing the ‘target’. Otherwise, the robot defaults to turning left. Each movement lasts only 10 frames to avoid over-rotation.
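The steering rule above reduces to a small decision function. The sketch below is illustrative: the command strings, the function names, and the drive callback (standing in for whatever sets the motor driver pins) are assumptions, while the rule itself and the 10-frame limit come from the text.

```python
MOVE_FRAMES = 10  # each movement lasts this many frames to avoid over-rotation


def choose_motion(left_hit, right_hit):
    """Map the two boundary detections to a motion command."""
    if left_hit and right_hit:
        return "stop"   # target is centered in front of the robot
    if right_hit and not left_hit:
        return "right"  # target is off to the right
    return "left"       # default search direction


def run_motion(command, drive, frames=MOVE_FRAMES):
    """Apply `command` through the `drive` callback for a fixed number of frames, then stop."""
    if command != "stop":
        for _ in range(frames):
            drive(command)
    drive("stop")
```

For example, run_motion(choose_motion(False, True), print) would issue "right" ten times and then "stop".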

To start, the camera software used in this project uses the legacy camera stack. From the documentation, “Raspberry Pi OS images from Bullseye onwards will contain only the libcamera-based stack”. OpenCV uses the legacy camera stack and was the simplest solution to incorporating the camera into our project. We used the non-legacy libcamera stack to test the camera and the legacy stack to implement OpenCV.

Installing dlib on the RPi was fairly straightforward. Before installation, we had to install CMake using pip install cmake, and then we installed the dlib package using python3 -m pip install dlib. These instructions differ slightly from the GitHub repository instructions, which use pip install dlib [1e]. In order to use dlib, we had to make sure that our version of Python matched the corresponding dlib version. This was as simple as ensuring we ran each script with python3 and not python or python2.

Working with dlib can be a bit confusing. For the most part, the examples on the project website are descriptive, and understanding how to use the API was straightforward. However, all datatypes are API specific. It is important to note that the dlib vector class can be converted to a numpy array using np.array(dlib_vector), which is how we were able to calculate the distance between face encodings.

When working with the servo motors, we misunderstood some of the documentation: the Adafruit website describes how to control the servo angle in terms of pulse width in milliseconds. Because the units were in time, we misread this as the PWM period and converted it to a frequency. Since the pulse width ranges from 1 to 2 ms, we ended up using a 500-1000 Hz frequency with a 50 percent duty cycle for our servo motors. This destroyed our servos, and we concluded that we were operating them incorrectly. To fix this, we converted the pulse width to a duty cycle so that the PWM frequency would be 50 Hz and the duty cycle would be in the range [0.05, 0.10]. Experimentation revealed that this did not cover the entire range of rotation, so we had to use slightly higher values for the upper bound.
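The arithmetic behind the mistake and the fix can be written out as a short sketch (the function names are hypothetical). At 50 Hz the period is 20 ms, so a 1 to 2 ms pulse corresponds to a duty cycle of 0.05 to 0.10, whereas reading the same 1 to 2 ms as a period gives the 500-1000 Hz we used at first.

```python
def pulse_to_duty(pulse_ms, frequency_hz=50):
    """Duty-cycle fraction that produces a pulse of pulse_ms at frequency_hz."""
    period_ms = 1000.0 / frequency_hz  # 20 ms at 50 Hz
    return pulse_ms / period_ms


def mistaken_frequency(pulse_ms):
    """Frequency obtained by wrongly treating the pulse width as the full period."""
    return 1000.0 / pulse_ms
```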

When testing the camera with OpenCV and PyGame, multiple issues arose. In our program, the frames read from the camera appeared rotated 90 degrees from the standard orientation. Because OpenCV uses BGR color channels, the red and blue colors were also swapped in PyGame. These two issues were easily resolved by converting the OpenCV frame to RGB for PyGame and rotating the PyGame surface. During development, the PyGame interface also displayed odd behavior where the R and B color channels were visibly changing throughout the image. The figure below is a sketch of what was happening.
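One way to handle both the channel order and the orientation before the frame reaches PyGame is sketched below. It is an alternative to rotating the surface, not what our script does, and it rests on an assumption about the cause: OpenCV frames are indexed as (row, column, channel), while pygame.surfarray.make_surface() expects (x, y, channel), so an unswapped frame appears turned on its side. The function name is hypothetical.

```python
import numpy as np


def opencv_to_pygame_array(frame_bgr):
    """Convert an OpenCV BGR frame (height, width, 3) to an RGB array (width, height, 3)."""
    rgb = frame_bgr[:, :, ::-1]    # reverse the channel axis: BGR -> RGB
    return np.swapaxes(rgb, 0, 1)  # (rows, cols) -> (x, y) as surfarray expects
```

The returned array can then be passed to pygame.surfarray.make_surface() to build the surface that is drawn on screen.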

| Functions | Face in Frame | No Face in Frame |
|---|---|---|
| OpenCV | 30 fps | 30 fps |
| PyGame and OpenCV | 30 fps | 30 fps |
| PyGame, OpenCV, Facial Recognition (OpenCV resize) | 10-12 fps | 6 fps |
| PyGame, Camera, Facial Recognition (frame resizing) | 20-22 fps | 6-8 fps |
| PyGame, Camera, Facial Recognition, Hardware (frame resizing) | 20-22 fps | 6 fps |

We noted that resizing the frame with OpenCV resulted in a massive drop in performance (about 66%). This was unacceptable: when we later tested it in our full system, it took too long to complete one sweep. By using the PyGame scaling function instead, we roughly halved the performance drop, and we found the resulting gimbal speed acceptable. The camera could sweep around in a reasonable time.

Another issue we encountered was operating the servo motors with software PWM. We first used software PWM for the horizontal servo to sweep the area in front of it. Our issue here was that the servo would stutter and did not sweep the area smoothly. To fix this, Professor Skovira suggested we use hardware PWM. This fixed the issue and was smoother than we had expected. When controlling the vertical servo, we found that software PWM was sufficient to hold it at an angle. However, when implemented into the full system, the vertical gimbal would jitter after performing the color detection. We aren’t sure what may have caused this, but it is most likely due to the small duty cycle range that the servo is controlled by. A video of the gimbal jitter is below:

Software PWM may not be as reliable when a lot of code is being executed. Switching the vertical servo to hardware PWM solved the issue, and the vertical position of the camera gimbal stayed constant throughout operation. We could have unplugged the servo instead, but that would have given us no control over it.

Finally, we attempted to create a different facial recognition model to speed up our facial recognition process. Our facial recognition system has to identify faces in the frame, then predict the facial landmarks, then compute the 128-D encoding of each face, and finally compare that encoding to the known images. Our system only had 2 authorized faces, and adding more would increase the computation time significantly. To address this, we tried to build a KNN model that took these encodings as input and output a confidence for each class (authorized person) [11] [12]. This would allow the robot to scale to many authorized users, as it would no longer iterate over a list of authorized users. However, our KNN model only ever output a confidence of 1 for some class, which made it unusable. We discuss alternatives to this in future work.

Initially, we had planned to make a security robot that patrolled a set path. This would be accomplished with marked targets, and the robot would move toward each one in order, either forwards or backwards. Due to time constraints, we were only able to implement the target detection, which is done through color detection. However, since we can detect when the target is in front of the robot, we would only need to implement a little logic to move the robot forward and calculate when it is close enough to the target. Overall, our project was broken up into the sections described above, and we designed and tested each section separately before integrating it into our system. Our design process completed these sections in order: Camera, PyGame Interface, Facial Recognition, Color Detection, Gimbal Control, Motor Control.

Overall, we met most of our goals outlined in the description. There is much room for improvement though, as darker complexion skin tones seem to be less detected and the detection model needs more training on diverse data. Additionally, we can increase the security aspects by having the robot patrol areas to increase its range of detection and possibly be used in more environments. We learned quite a bit about making sure our hardware connections are correct and datasheets are read thoroughly to avoid breaking any parts. We also learned more about how to choose machine learning models and ethical issues surrounding our system. For example, we have to ensure that our robot is not storing personal information while under operation and is not discriminatory.

Overall, we were able to make an embedded system that was able to identify authorized users and intruders when in frame. We were also able to correctly find a target and keep it in the center of the robot. To fit the time constraints of the class, we had to simplify multiple aspects of our project, such as the facial recognition process and navigation.

Through development, we discovered that two scripts or processes cannot open the camera at the same time. An error is thrown when attempting to do this. The first script to open the camera is not kicked off and remains running.

We also attempted to split the work into two scripts, one for facial recognition and one for the object detection that controls the motors. This ultimately failed for the reason above. We then tried having one script take a picture and save it to a file, which the other script would read and process. This also did not work, because both scripts eventually tried to access the same file at the same time, which caused one of the programs to terminate. In hindsight these failures are fairly intuitive, but we were hoping that our intuition would turn out to be wrong.

We also learned that software PWM is unacceptable for the servo motors. This is most likely because the duty cycle is so small that software PWM is not precise enough to keep the servos operating consistently.

For future work, we would definitely want to implement our own facial recognition model to decrease the time taken by the facial recognition process. Framerate is an important measure of our robot’s performance, and its functionality is bottlenecked by the facial recognition, so speeding this up would be one of our main goals. To do this, we thought that using a CNN to process the area of a face would be a good avenue to explore. CNNs are commonly used in image processing, and with one we would most likely have no need for the shape prediction and facial recognition models from dlib. We would also work on having the robot navigate the floor to patrol a somewhat set path. This would be done by placing easily identified, labeled markers that the robot would pursue until it got close enough. We thought about using ideas like pure pursuit, but our method of color detection can also work to keep the target centered. This would allow our robot to traverse greater distances and ensure security in a larger area. We also experimented with remote access by connecting to the RPi camera. As mentioned earlier, two processes cannot open a single camera, so this approach did not work. However, we would want to explore ways to stream the PyGame video so that a security guard or similar person could monitor the footage and respond to any threats that are detected.

Rpi Camera V2 ($\$29.95$)

Camera gimbal ($\$18.95$)

Protoboard, Red LEDs, Resistors (Lab Provided)

Total price = $\$48.90$

dlib Python Examples (For python examples to use)[1]

Autonomous Tracking Robot (Balloon Bot) [2]

OpenCV Changing Color Spaces [3]

Micro Servo [5]

Hardware PWM [6]

Raspberry Pi Camera Documentation [7]

Distance Formula [8]

Pygame.transform reference [9]

Pi Camera Stream [10]

Sklearn Model [11]

Assembling the camera gimbal [13]

Canvas OpenCV tutorial [15]

Greg

Akugbe

Thank you, Professor Skovira, for your guidance throughout the semester, whether it was with OpenCV or lab parts.

Thank you to Autonomous Tracking Robot (Balloon Bot) for the dlib reference, along with modeling milestones for our project.