Driver Monitor

Yu Zhang (yz2729) and Sirena Depue (sgd63)

2021-12-15

Demonstration Video

Introduction

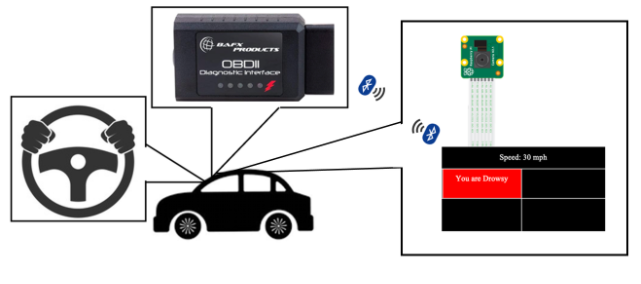

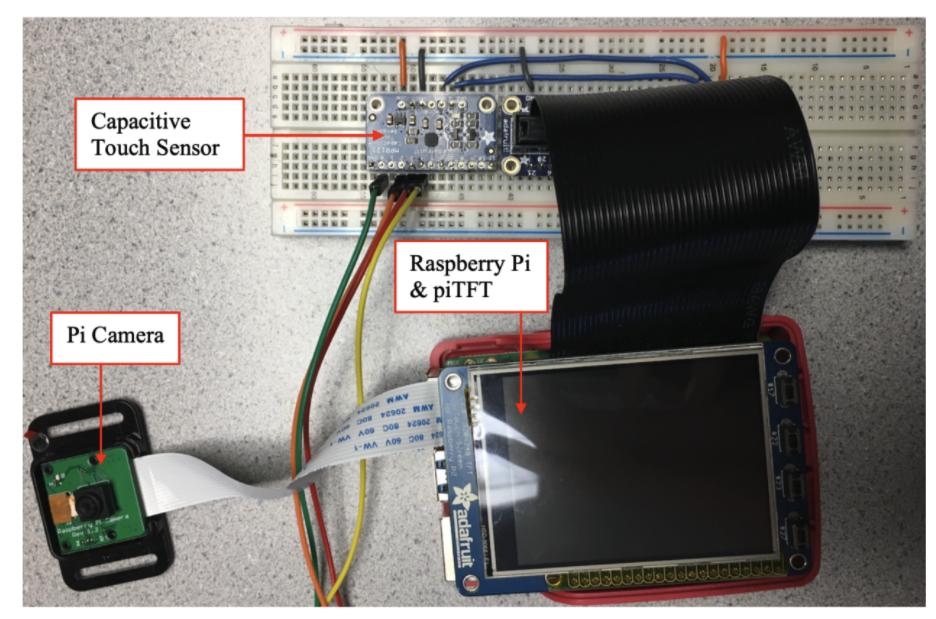

The purpose of this project is to create a self-contained system that monitors the driver and sounds an alert when the driver is distracted. There are several categories within ‘distracted driving’ that we considered. Using OpenCV and DLib to detect a face and extract facial features from Pi camera footage, we can detect whether the driver is drowsy, is facing away from the road, or is looking away from the road. In addition, a capacitive touch sensor is used to detect whether the driver’s hands are on the steering wheel. An OBDII scanner is used to log the speed of the vehicle in real time. To avoid unnecessary alerts, the alarms are paused when the car is safely stopped.

Additionally, there are time thresholds that must be exceeded before an alarm goes off. The triggering of the alarms is also reflected in a basic piTFT display. The following report outlines the implementation and testing of each feature and suggested future improvements.

Project Objective:

- Driver monitoring, including facing away detection, drowsiness detection, eye tracking and steering wheel detection

- Connecting to the car and monitoring vehicle speed in real time

- Warning the driver through the interface once an event is recognized

Design & Testing

- Drowsiness Detection

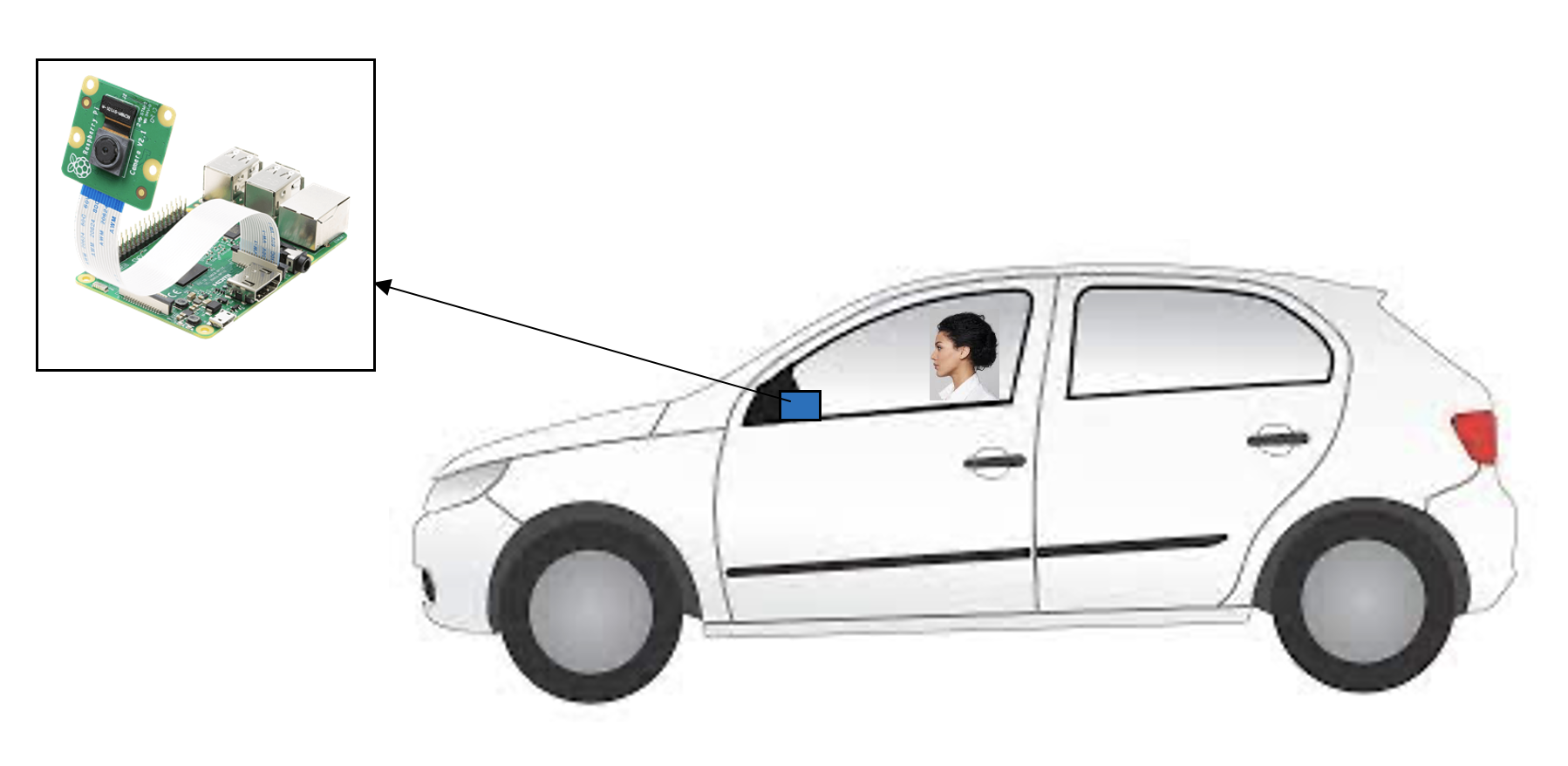

The drowsiness detection feature requires the use of a Pi camera. At the suggestion of a previous team, we installed OpenCV using this tutorial. After installation (~1 hour), it was imported via “import cv2”. DLib was simply installed using “pip install dlib”.

The picamera was set up using the following commands:

    cap = cv2.VideoCapture(0)
    cap.set(cv2.CAP_PROP_FOURCC, cv2.VideoWriter_fourcc('M', 'J', 'P', 'G'))

Following this, we call dlib to initialize the face detection and facial landmark prediction:

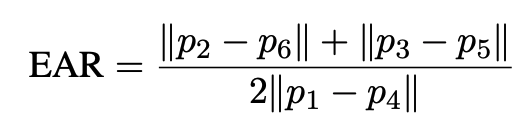

    detector = dlib.get_frontal_face_detector()
    predictor = dlib.shape_predictor(shape_predictor_path)

We use the Eye Aspect Ratio (EAR) algorithm, introduced by Soukupová and Čech in their 2016 paper [1], to determine whether the driver is drowsy.

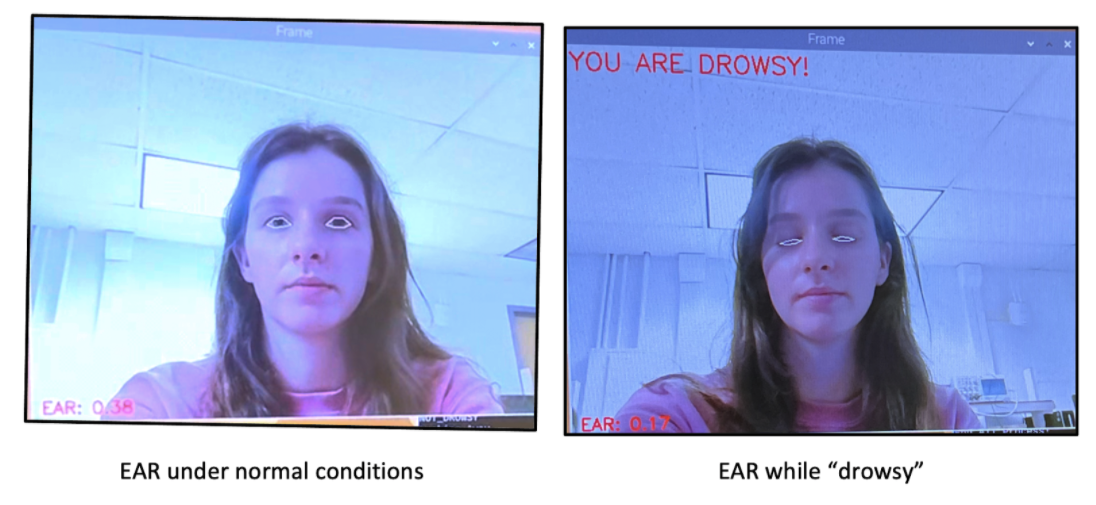

The EAR value is calculated from the landmarks around each eye using the formula from [1]. When the eyes are fully open, the EAR value stays above a pre-defined threshold. When the eyes close, the EAR value decreases toward zero and falls below that threshold.
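As a minimal sketch of the EAR computation from [1]: the ratio is the sum of the two vertical eyelid distances divided by twice the horizontal eye width. The function names, the landmark ordering p1..p6, and the 0.25 threshold are illustrative assumptions, not the values used in this project.

```python
from math import dist

# Illustrative threshold only; the project's actual value is not stated.
EAR_THRESHOLD = 0.25


def eye_aspect_ratio(eye):
    """Return the EAR for six (x, y) eye landmarks ordered p1..p6.

    p1 and p4 are the horizontal eye corners; (p2, p6) and (p3, p5)
    are the two vertical pairs of eyelid points.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = dist(p2, p6) + dist(p3, p5)
    horizontal = dist(p1, p4)
    return vertical / (2.0 * horizontal)


def eyes_closed(eye, threshold=EAR_THRESHOLD):
    """True when the EAR falls below the threshold."""
    return eye_aspect_ratio(eye) < threshold


# Toy landmark sets: a wide-open eye and a nearly closed one.
open_eye = [(0, 0), (2, 2), (4, 2), (6, 0), (4, -2), (2, -2)]
closed_eye = [(0, 0), (2, 0.2), (4, 0.2), (6, 0), (4, -0.2), (2, -0.2)]
```

Because the ratio divides by the eye width, it stays roughly constant as the face moves closer to or farther from the camera, which is why a single threshold can be used.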

The test result of our program is shown in the figure below. The image on the left shows the EAR value under normal conditions, and the image on the right shows the EAR value when the eyes are closed.

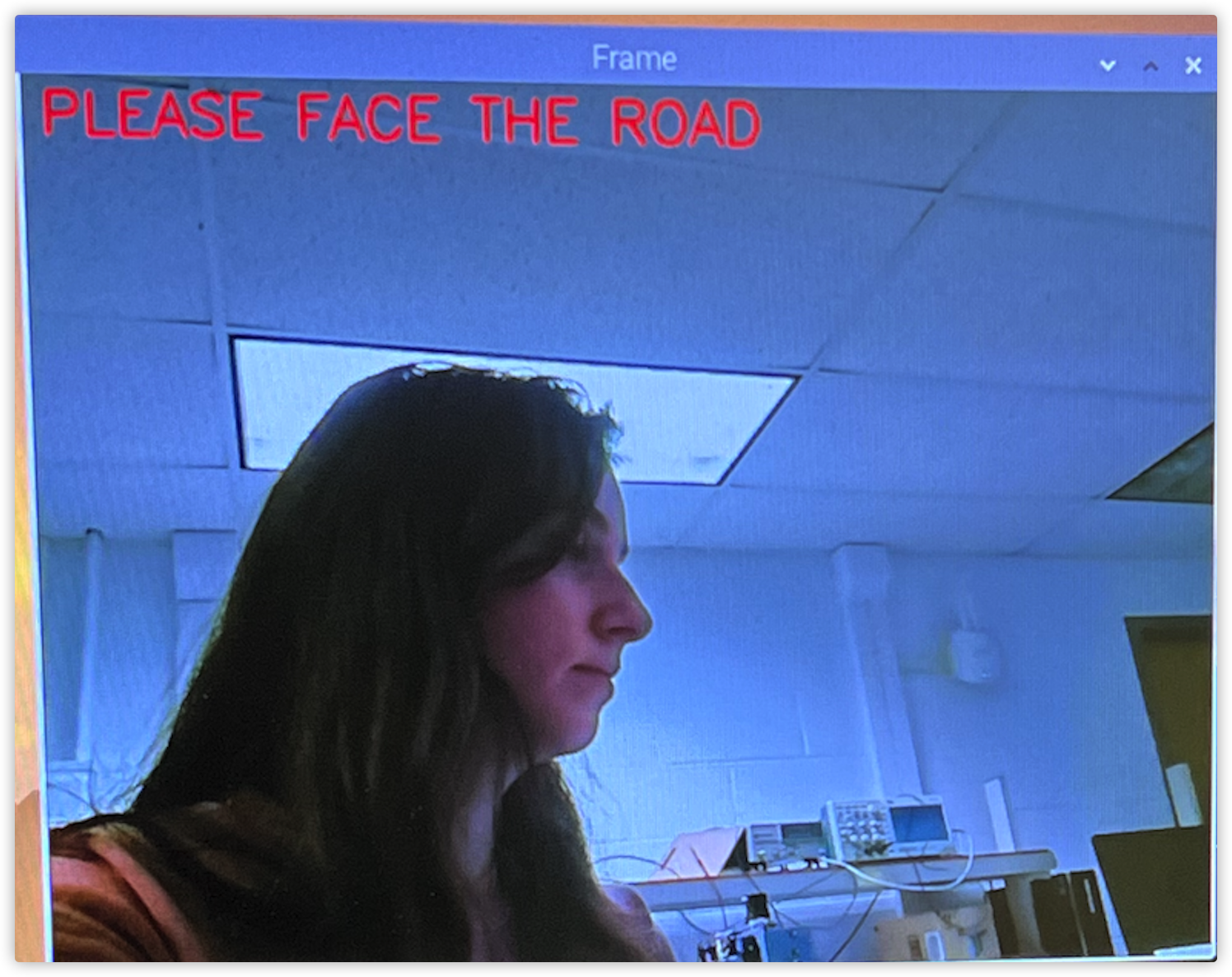

Drowsiness Detection

- Facing away Detection

An extension of this feature is to consider when the driver is facing away from the camera. When this occurs, dlib can no longer detect a face, so facial features cannot be computed. If the numpy array returned is empty, we know that no face was detected; we then assume the driver is facing away from the road and is therefore distracted, and an alarm is sounded. One additional case is the driver facing away while a drowsiness or looking-away alarm is still triggered. If this occurs, the other alarms are disabled, because the system can no longer detect the driver's face and cannot determine whether the driver is indeed drowsy or looking away.
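The priority described above (no face first, eye-based checks only when a face exists) could be sketched as follows. This is an illustration rather than the project's code: `classify_frame`, its parameters, the 0.25 EAR threshold, and the labels other than “EYE_OFF_ROAD” are hypothetical.

```python
def classify_frame(faces, ear=None, gaze_offset=None,
                   ear_threshold=0.25, gaze_threshold=8):
    """Return a label for one frame, or None if nothing is detected.

    `faces` is whatever sequence the face detector returned. An empty
    sequence means no face was found, so the eye-based checks are
    skipped because they have no landmarks to work with.
    """
    if len(faces) == 0:
        return "FACING_AWAY"      # hypothetical label
    if ear is not None and ear < ear_threshold:
        return "DROWSY"           # hypothetical label
    if gaze_offset is not None and abs(gaze_offset) > gaze_threshold:
        return "EYE_OFF_ROAD"     # label quoted in the Alarm System section
    return None
```

Checking for an empty detection first means a stale EAR or gaze value from an earlier frame can never raise a drowsiness or looking-away event once the face is lost.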

Facing away Detection

- Eye Tracking

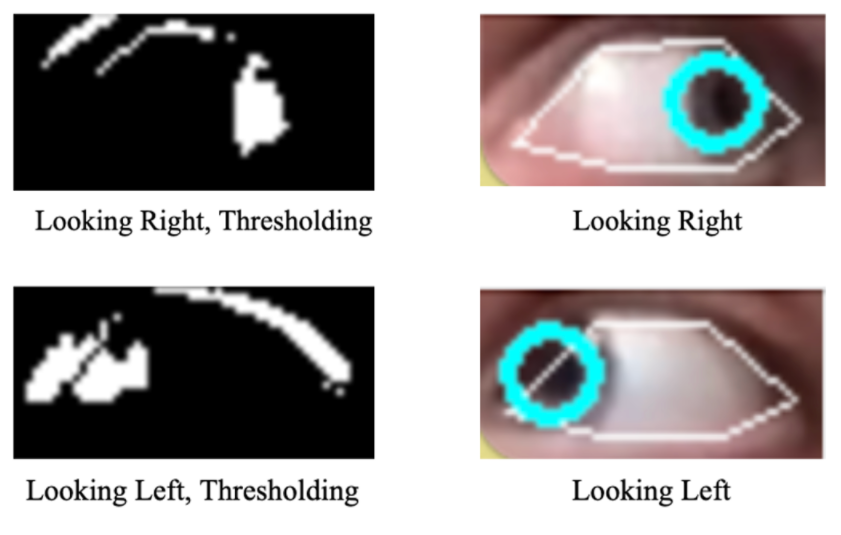

Because a face can still be detected when a person angles their head away from the camera, eye tracking is also implemented to check whether their eyes are focused on the road. In implementing the drowsiness detection, the recognition and extraction of facial features was already initialized. A function was added to return the coordinates of the outer corner (x1), inner corner (x2), bottom (y1), and top (y2) of the left eye. Since humans cannot move their eyes independently of each other, we only considered the left eye to reduce image processing. The function is called and the returned values are used to crop each frame of the video. This initially did not work because we had swapped the x1 and x2 values when cropping. The problem was only found once we displayed the cropped frame via `cv2.imshow`. After this fix, the rest of the code worked well.

Next, the pupil needs to be delineated from the whites of the eyes. To achieve this, each frame is prepared using `cv2.bitwise_not` to invert the image and `cv2.cvtColor` to convert it to grayscale. Then, we use thresholding to segment the image into foreground and background (pupil and whites of the eye) via some threshold value, T. Because the lighting conditions vary, a constant threshold value will not predictably segment the image. Instead, we use adaptive thresholding via `cv2.adaptiveThreshold`, which computes a threshold value T(x, y) for each pixel as the mean of the blockSize × blockSize neighborhood of (x, y) minus a constant C, according to the documentation [2].

Now we can find the contours of the pupil in each frame using `cv2.findContours()`, which, as its name suggests, extracts the contours from an image. We find the center coordinates of the pupil via `cv2.moments()` and compare the x-coordinate to that of the center of the left eye. If |x(center of eye) - x(center of pupil)| > 8, then the person is looking away. The direction can be distinguished by considering the sign, but this was not necessary for this project. Snapshots are shown below with a circle drawn on the detected pupil and with the thresholding applied. Although not perfect, the adaptive thresholding finds the pupil when looking left and right well enough that the contours can be found.

A website was loosely referenced to understand the basic steps used in eye tracking, namely thresholding and finding the contours. Beyond these necessary steps, our implementation differs: we used our initial drowsiness code to “prepare” each frame differently (cropping rather than creating a mask), we chose adaptive thresholding for better real-time results, and we used a different metric for determining whether the person was looking away.
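To make the thresholding and centroid steps concrete, here is a pure-Python sketch on a toy image. It is a simplified stand-in for `cv2.adaptiveThreshold` (mean method) and `cv2.moments`, not the project's code: the helper names are hypothetical, neighborhoods are simply clipped at the border, and C is set to 0 so that flat regions of the toy image map to background.

```python
def adaptive_mean_threshold(gray, block_size, c):
    """Binarize a 2D list of grayscale values.

    A pixel becomes 1 when it is brighter than T(x, y), the mean of its
    block_size x block_size neighborhood minus c. Neighborhoods are
    clipped at the image border (a simplification).
    """
    h, w = len(gray), len(gray[0])
    r = block_size // 2
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            window = [gray[j][i]
                      for j in range(max(0, y - r), min(h, y + r + 1))
                      for i in range(max(0, x - r), min(w, x + r + 1))]
            t = sum(window) / len(window) - c
            out[y][x] = 1 if gray[y][x] > t else 0
    return out


def centroid(mask):
    """Return (cx, cy) from the raw moments m10/m00 and m01/m00, or None."""
    m00 = m10 = m01 = 0
    for y, row in enumerate(mask):
        for x, v in enumerate(row):
            if v:
                m00 += 1
                m10 += x
                m01 += y
    if m00 == 0:
        return None
    return (m10 / m00, m01 / m00)


def looking_away(eye_center_x, pupil_center_x, threshold=8):
    """Apply the |x(center of eye) - x(center of pupil)| > threshold test."""
    return abs(eye_center_x - pupil_center_x) > threshold


# Toy inverted eye crop: dark background (20) with a bright 3x3 "pupil" (200).
eye = [[20] * 9 for _ in range(5)]
for y in range(1, 4):
    for x in range(5, 8):
        eye[y][x] = 200

mask = adaptive_mean_threshold(eye, block_size=9, c=0)
pupil = centroid(mask)
```

In this toy crop the pupil sits right of the crop center (x = 4), so the offset is small in absolute pixels; on a real frame the threshold of 8 from the text would be applied to the cropped eye at camera resolution.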

Eye Tracking

- Steering Wheel Detection

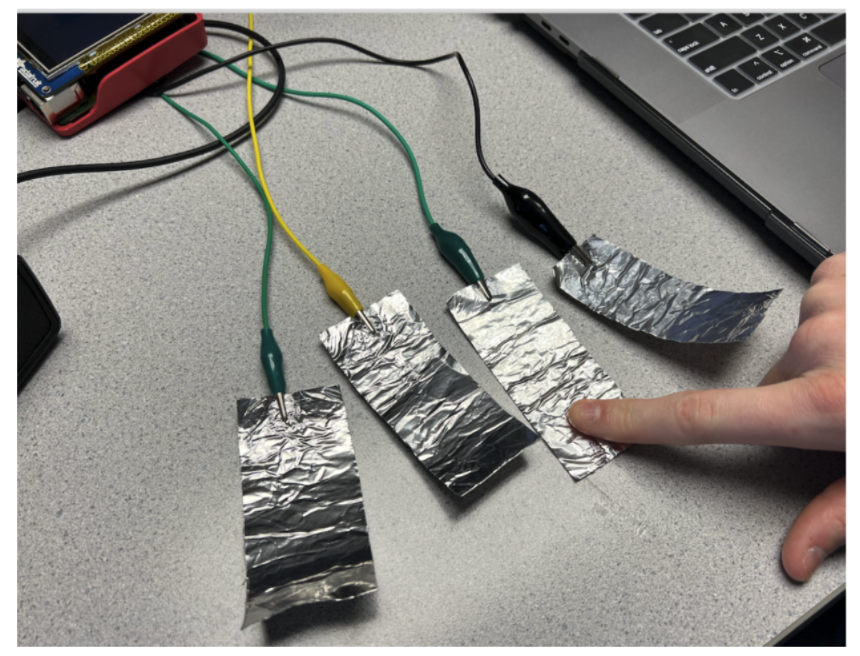

A feature to detect whether the driver has their hands on the wheel is also implemented. For this, we first considered using an analog force sensor. After some testing, it was determined that the sensors were not sensitive enough for this application - a voltage difference was detected only when squeezed. Although this could be resolved via a voltage divider, we decided to try a simpler alternative: capacitive touch sensors. They detect a change in capacitance when someone touches the sensor, therefore only outputting a low or high state. Additionally, they provide flexibility in that they can be attached to conductive surfaces (aluminum tape) via alligator clips. This allowed us to increase the surface area for each sensor and cover a greater area of the steering wheel. Realistically, this would require a steering wheel cover with many sensors since a person may hold the wheel in any position.

For the purposes of this project, we will only use two sensors for each hand at the recommended 9 and 3 o'clock positions. If at least one of the surfaces is touched (i.e. high), then it is assumed that the person has at least one hand on the wheel. However, if none of the surfaces are touched (i.e. low), then it is assumed the person has neither hand on the wheel and an alarm is sounded. This is achieved by checking the values of each pin within a polling loop and triggering an alarm when all values are low.
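The polling check can be sketched as below. This is a hedged illustration: `read_pins` and `sound_alarm` are hypothetical callables standing in for the touch sensor driver and the alarm output, and the label string is made up for the example.

```python
def hands_on_wheel(pin_states, min_touched=1):
    """True when at least `min_touched` sensor pins read high."""
    return sum(1 for state in pin_states if state) >= min_touched


def poll_once(read_pins, sound_alarm):
    """Read all sensor pins once and alarm if no hand is detected.

    `read_pins` returns an iterable of booleans (one per sensor pad);
    `sound_alarm` receives a label describing the event.
    """
    states = list(read_pins())
    if not hands_on_wheel(states):
        sound_alarm("HANDS_OFF_WHEEL")  # hypothetical label
        return False
    return True
```

In a real loop, `poll_once` would be called repeatedly with a short sleep between reads. The `min_touched` parameter is an illustrative knob for requiring contact on more than one pad.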

Steering Wheel Detection | Aluminum Tape Connected to Capacitive Touch Sensor

- Connect Car with OBDII Reader

Another feature we implemented was using real-time car speed data to determine whether to sound alarms. The data collection was achieved through an OBDII scanner, which plugs into the OBDII port found in all cars sold in the USA since 1996. The port can be used to access live data from the car, including speed. Using this, if the speed was greater than 0 and the driver was distracted, the system would issue an alarm. However, if the car was not moving (parked, stopped in traffic) and the driver was distracted, the system would not issue an alarm. This prevents the driver from being alerted when the car is safely stopped.

We encountered several issues when purchasing an OBDII reader. The only car we had access to was an Alfa Romeo Giulia, an Italian car. However, after reading through some forums, we discovered that since it was sold in the USA, the connection had to be standard. We first ordered a Wi-Fi OBDII reader, but after some time we were still unable to connect to it. Instead, we ordered a Bluetooth OBDII reader as an alternative. We used the same OBD install and BLE connection setup as a team from the previous year, which was very straightforward. In brief, we enabled the Bluetooth connection, scanned for and connected to the OBDII reader, and extracted the speed using a simple script. A Wi-Fi reader should work, but we would recommend a Bluetooth OBDII reader, as it is well documented and much easier to set up.
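The speed gating described in this section, combined with the per-feature time threshold mentioned in the introduction, might look like the sketch below. The class name and the `frames_needed` parameter are hypothetical, and resetting the count when the driver becomes attentive again is an assumption about how "successive" frames are counted.

```python
class DistractionCounter:
    """Count consecutive distracted frames while the car is moving."""

    def __init__(self, frames_needed):
        self.frames_needed = frames_needed  # hypothetical per-feature threshold
        self.count = 0

    def update(self, distracted, speed):
        """Return True when the alarm should sound for this frame."""
        if not distracted or speed <= 0:
            # Attentive driver or stopped car: start counting from zero again.
            self.count = 0
            return False
        self.count += 1
        if self.count >= self.frames_needed:
            # Sound the alarm once, then reset the counter.
            self.count = 0
            return True
        return False
```

With `frames_needed=3`, three consecutive distracted frames at a non-zero speed trigger one alarm, while a speed reading of 0 at any point clears the count.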

- Multiprocessing

Facial recognition and facial feature extraction are a heavy burden for the Raspberry Pi, especially since a single Python process can use at most one core. With this in mind, we took a multi-process approach to improve the performance of drowsiness detection. We split the original program into three processes. The `img_put()` process reads the input from the camera and passes each frame to the `face_process()` process, which extracts the facial information and sends the result to the `img_get()` process. The `img_get()` process displays the result on the output.

In theory, it is also possible to run multiple `face_process()` processes by using a process pool (`multiprocessing.Pool`) to make face processing faster. However, in actual testing, because the Raspberry Pi's performance is limited and other programs must run at the same time, the extra resource consumption from multiple `face_process()` processes degraded the overall result.

- Alarm System

For each distracted feature, a different threshold is set that must be met before an alarm is triggered. A counter variable is incremented by 1 for each successive frame in which the distracted feature is detected. Once this counter reaches the predefined threshold, a word indicating the distracted feature is printed and written to a file. For example, if the driver was looking away, we use `f.write` to write “EYE_OFF_ROAD” to the file. The last word from this file is extracted, and the corresponding alarm is triggered. We used `espeak` to audibly sound an alarm through speakers connected to the audio jack. Once the distracted instance is detected, the audible alarm is sounded once, and then the counter is reset.

- Pygame Interface

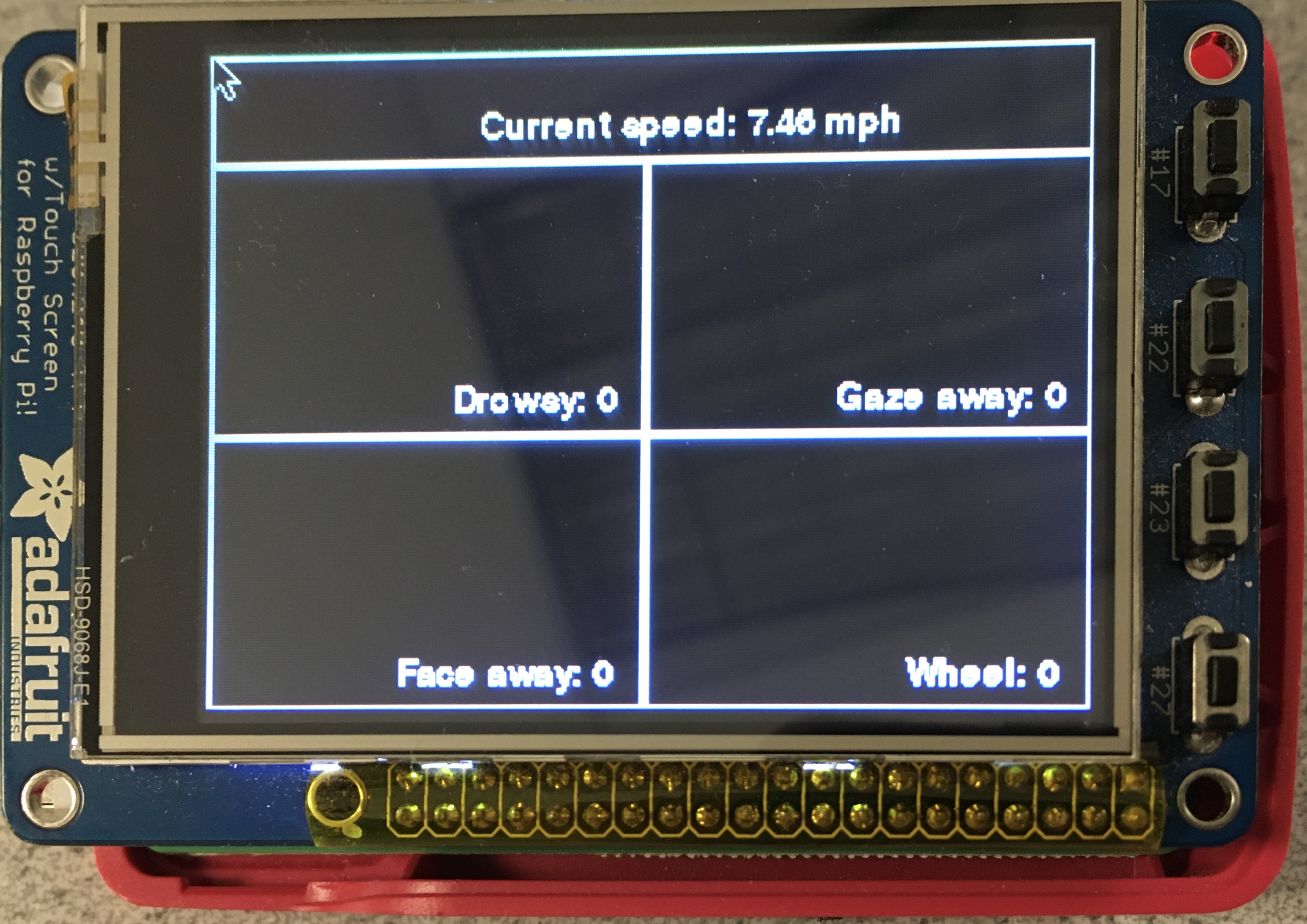

Using pygame, we created a basic interface to display on the piTFT. We split the screen into five sections. A small section at the top of the screen shows and updates the speed of the car. The remainder is separated into four quadrants via the `pygame.draw.line()` function, and each quadrant is devoted to one of the four main features (drowsiness, facing away, eye tracking, and wheel detection).

Each quadrant is initialized as black, and if distracted driving is detected, the appropriate quadrant is filled with red and a statement is printed to the screen. At the bottom of each quadrant there is also a counter, which records the number of instances of each distracted driving event. Finally, a quit button was implemented using one of the physical piTFT buttons, allowing the user to cleanly exit the program.
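The five-section layout can be computed independently of pygame, as in this sketch. The 320×240 screen size, the 40-pixel banner height, and the assignment of features to quadrants are assumptions made for illustration.

```python
def layout(width=320, height=240, banner_h=40):
    """Return (x, y, w, h) rectangles for the speed banner and four quadrants."""
    half_w = width // 2
    half_h = (height - banner_h) // 2
    names = ["drowsiness", "facing_away", "eye_tracking", "wheel"]
    rects = {"speed": (0, 0, width, banner_h)}
    for i, name in enumerate(names):
        col, row = i % 2, i // 2  # fill left to right, then top to bottom
        rects[name] = (col * half_w, banner_h + row * half_h, half_w, half_h)
    return rects


rects = layout()
```

Each tuple can be passed to `pygame.draw.rect(screen, color, rect)` to fill a quadrant with black or red, where `screen` is the display surface.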

Pygame Interface

Drawings

Result

Our demo worked well, with all major features performing as intended. The multiprocessing reduced the load on the system, allowing it to run with no noticeable impact on performance. The drowsiness, facing away, and steering wheel detection all worked seamlessly. The eye tracking worked quite well, although it would need to be tested in a car before being implemented in any real system. For example, someone could be averting their gaze from the road to check their rearview or side mirrors. The number of seconds before sounding an alarm, as well as the threshold D in |x(center of eye) - x(center of pupil)| > D, would need to be tested and tuned to work for a real driver.

For the demonstration, we used a speed log obtained earlier from the OBDII scanner. The speed values were read as if in real-time. This worked well, although in retrospect, we should have obtained a log that did not include as many starts and stops (we recorded it in a parking lot). If a potential distraction was detected, but the speed went to zero before the alarm threshold was met, the counter was reset. This made it important to consult the speed when showing the features during the demonstration. Instead, we should have obtained two logs for simplicity: one with the car stopped and one with the car moving. Finally, when the alarms were triggered, the piTFT screen would update appropriately, but the sound from the speakers did not work. Despite these minor issues, we were able to meet our initial goals with these features showing promising results.

Conclusion

Overall, we were pleased with our demonstration as our system worked quite well. The drowsiness, facing away, looking away, and steering wheel detection worked as expected. The display on the piTFT was simple and effectively showed the triggering of alarms, visually alerting the driver. Some issues we encountered included the sound alarm not working and the eye tracking being a little finicky. The thresholds for eye tracking needed to be tuned more to resolve this issue. Additionally, when preparing for demonstration, we should have obtained better speed logs.

Future Improvements

In the current alarm design, only the last word written to the file is extracted. Because there is some latency when retrieving the last value, this could become an issue if two distracted events are detected simultaneously. Using a queue is a possible alternative, where the alert at the front of the queue is popped and the appropriate alarm triggered. Every time a new alert comes in, it would be appended to the end of the queue.
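A minimal sketch of the queue idea, assuming a single process and using the standard library's `collections.deque`. The class name and labels other than “EYE_OFF_ROAD” are hypothetical; sharing alerts between separate processes would need an inter-process queue such as `multiprocessing.Queue` instead.

```python
from collections import deque


class AlertQueue:
    """First-in, first-out buffer of pending alert labels."""

    def __init__(self):
        self._pending = deque()

    def push(self, label):
        """Append a newly detected alert to the end of the queue."""
        self._pending.append(label)

    def pop(self):
        """Return the oldest pending alert, or None when the queue is empty."""
        return self._pending.popleft() if self._pending else None


alerts = AlertQueue()
alerts.push("EYE_OFF_ROAD")
alerts.push("DROWSY")  # hypothetical label
```

Because every alert is kept until it is popped, two events detected in the same frame are both announced, in the order they arrived.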

For the steering wheel detection, it was pointed out during the demo that someone could trick the system and drive with minimal contact of one hand (holding one finger to a sensor). This could be adjusted by requiring at least two sensors be touched.

One potential extension of the OBDII would be to consider the direction of the car's travel. If the driver was reverse or parallel parking, this would require the person to look behind them, thus triggering an unnecessary alarm. Either we could use the OBDII to determine when the car is in reverse and disable the alarms, or there could be a physical button for the driver to momentarily disable the alarms (for 2-3 minutes), while the person parks.

Because all of these detectors (except steering wheel) require a camera, performance is expected to decrease markedly at night. This presents an issue, since drivers are more likely to be drowsy at night, and despite 60% less traffic on the roads, more than 40% of all fatal car accidents occur at night [4]. Since it is considered a distraction for a driver to have cabin lights on in the car, we would have to use a night vision camera.

Work Distribution

Yu Zhang

yz2729@cornell.edu

Designed the overall software architecture and implemented functions including drowsiness detection, facing away detection, multiprocessing, OBDII, pygame display

Sirena Depue

sgd63@cornell.edu

Built the hardware system and implemented functions including: eye tracking, steering wheel detection, OBDII, and pygame display

Parts List

| Parts | From | Cost |
|---|---|---|
| Raspberry Pi | Lab | $0.00 |
| Raspberry Pi Camera | Lab | $0.00 |
| 12-Key Capacitive Touch Sensor | Lab | $0.00 |
| Speaker | Used our own | $0.00 |
| Aluminum Tape | Amazon | $5.98 |
| Bluetooth OBDII Scanner | Amazon | $20.99 |

Total: $26.97

References

- Real-Time Eye Blink Detection using Facial Landmarks

- Real-Time Eye Tracking Using Opencv and Dlib

- MPR121 Capacitive Touch Sensor Documents

- Night Driving

- Optimizing Opencv on the Raspberry Pi

- Distracted Driving Monitor

- Raspberry Pi: Facial Landmarks + Drowsiness Detection with Opencv and Dlib

- Miscellaneous Image Transformations

- Proximity Capacitive Touch Sensor Controller MPR121

- TymanLS/PI-Cardash: A Raspberry Pi Based Car Dashboard

- Pi Camera Documentation

Code Appendix

The complete code is hosted on GitHub; here we show only the code for face_process.py.