Workout Buddy

ECE5725 Final Project

By Ishaan Thakur (it233) and Shreyas Patil (sp2544).

Workout Buddy Ad!

Introduction

The COVID-19 pandemic has changed the way the world works. Not only have people’s jobs moved online, but many recreational activities have also adapted to virtual platforms. One activity that most people have not been able to take part in is personal gym training. With this project, we aim to create a virtual gym trainer, ‘Workout Buddy’: a Raspberry Pi based system that lets a user choose which body part they want to work on, then counts and records their repetitions while displaying the count on the screen. Once the user is done with their workout, they can email the full workout report to themselves with a single tap on the touchscreen interface.

Project Objective:

Our Raspberry Pi based system allows one to get their workout done without going to a physical gym. The system not only has a wide array of exercises that target a person’s key muscle groups over the entire body - arms, shoulders, abs, chest and legs - but also an email based monitoring system to let the users keep track of their exercises. Through this project we wish to provide a solution to promote fitness among people who cannot go to a physical gym either due to the pandemic or busy work schedule.

Design

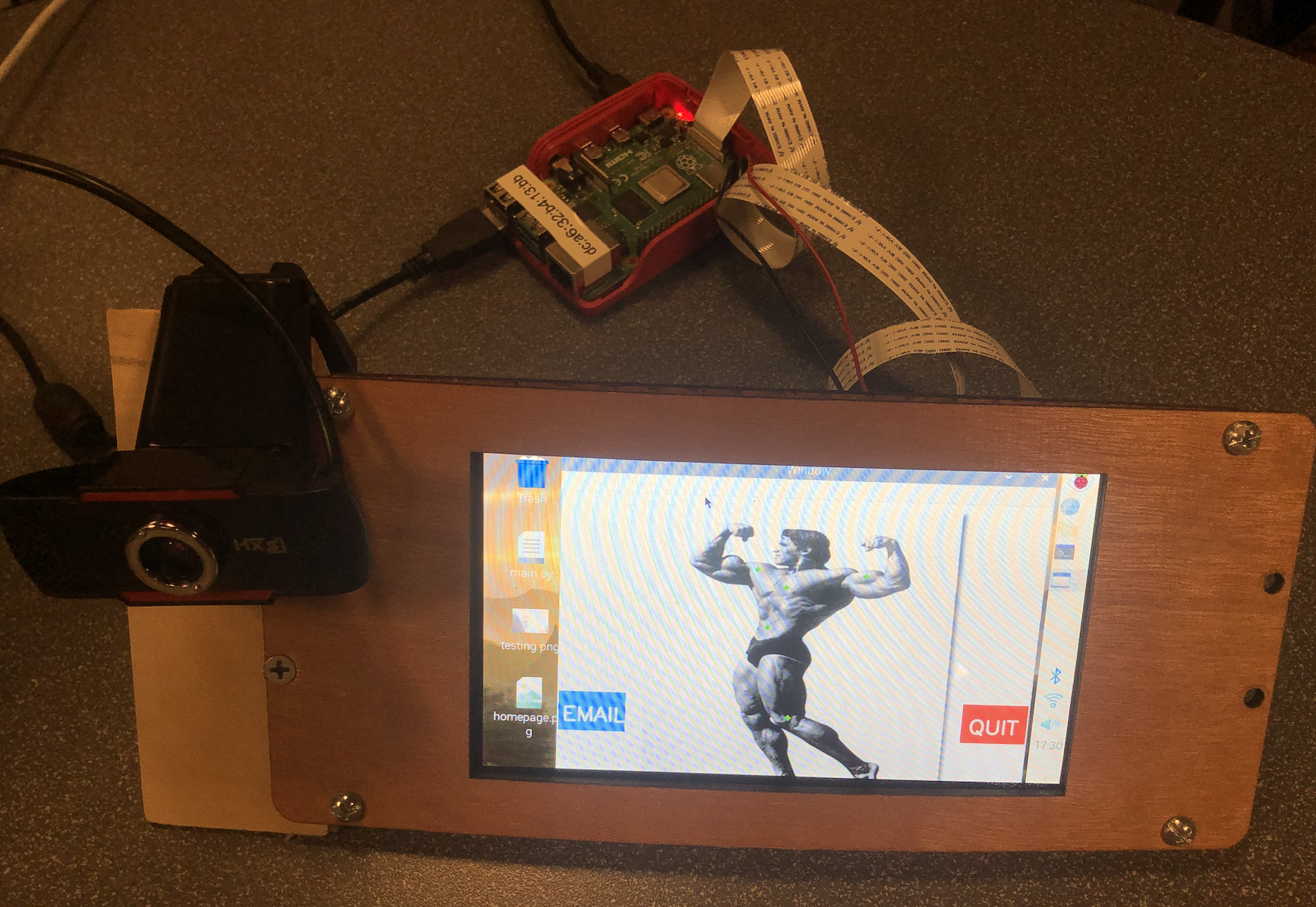

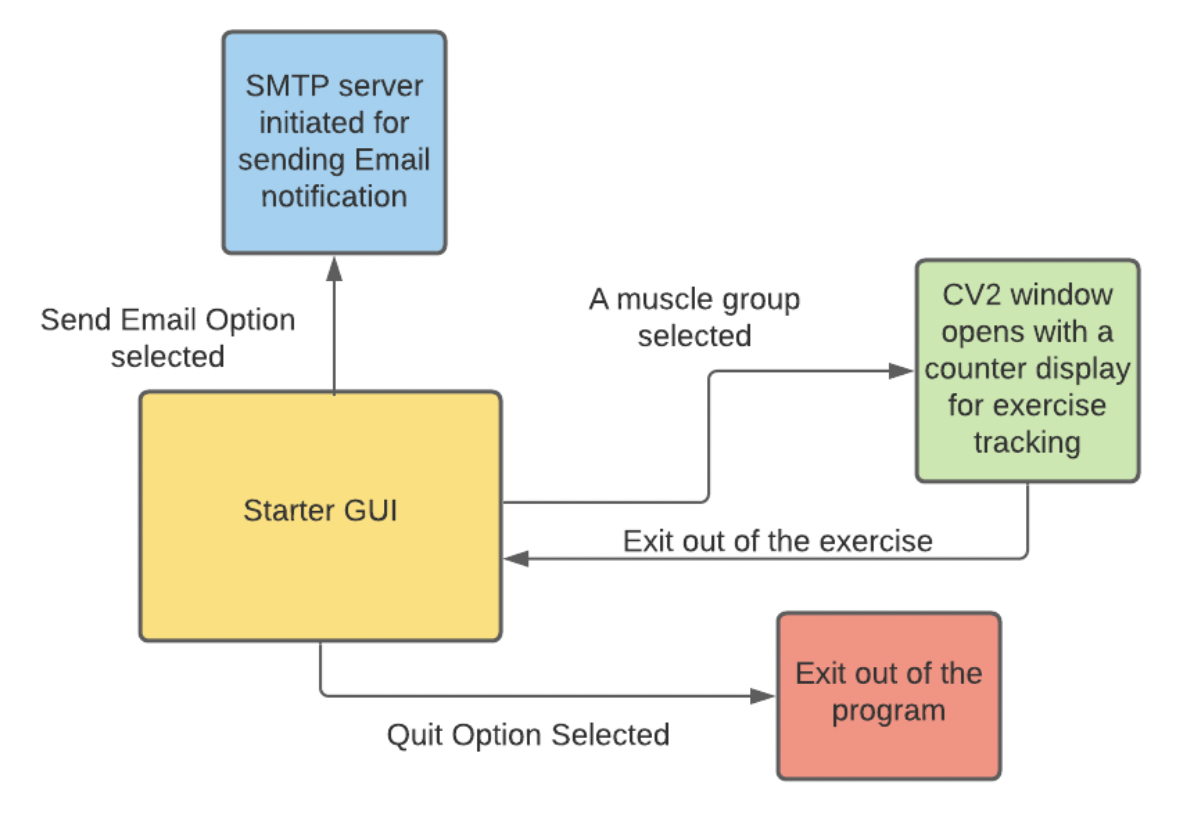

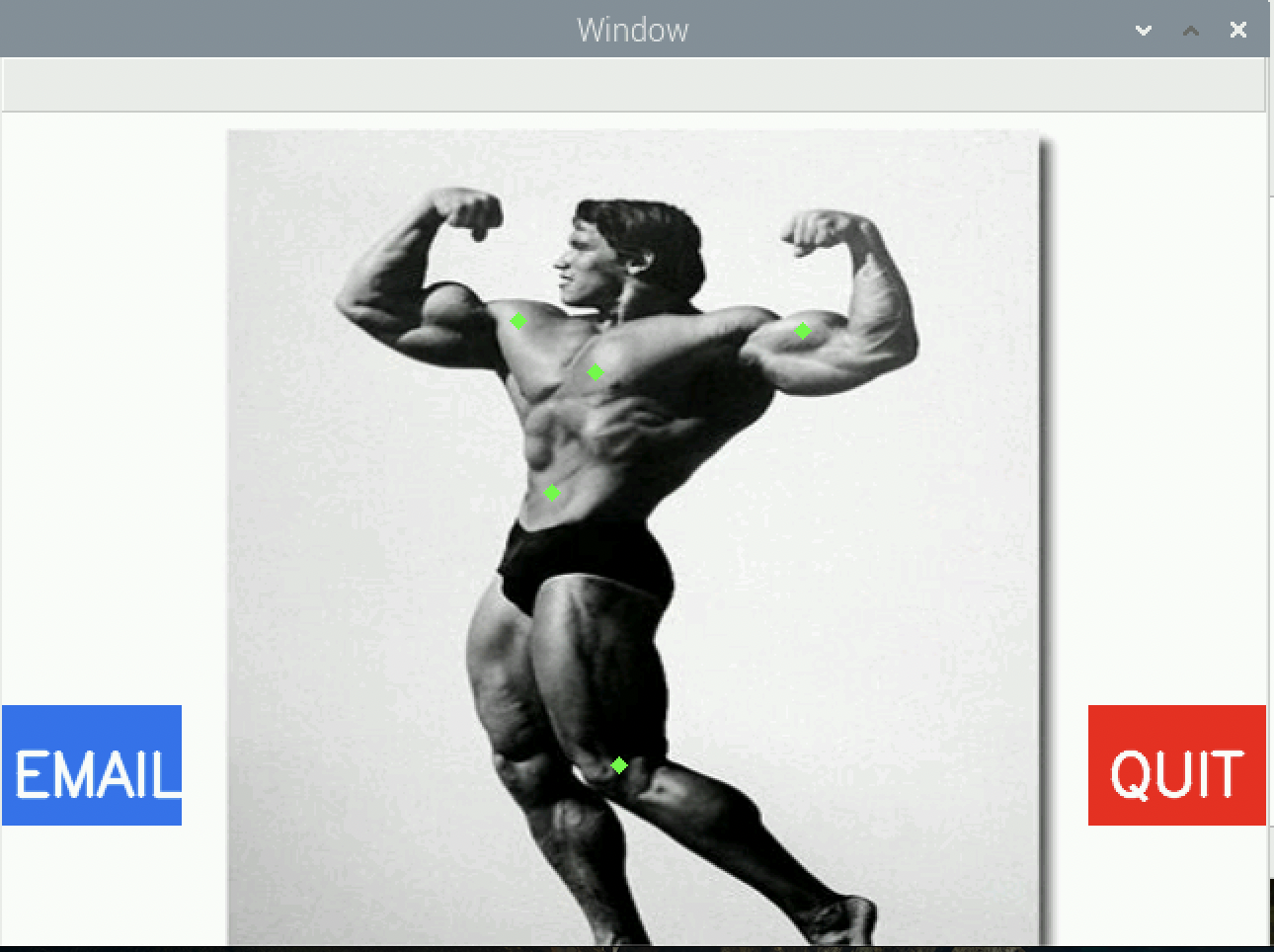

This project is designed and implemented entirely on a Raspberry Pi 4. When the user starts the Workout Buddy program, they are greeted by the home screen on the 7-inch touchscreen, which lets them choose the body part they wish to work on. When the user taps a body part, the Raspberry Pi launches a second window in which the exercise is recorded and tracked. The Raspberry Pi opens the camera and records video of the user while displaying it on the screen as feedback. Every frame is processed to locate the person in it, and pose estimation is then performed on that person’s body. The body is divided into segments and joints, and the movement of each part is tracked from frame to frame. Using this, the program counts the repetitions of the selected exercise. The segments and the repetition count are overlaid on the displayed video. Once the user is done with an exercise, they can tap the quit button on the exercise window. This returns them to the home screen, where they can either work on another body part or finish the workout. To finish, the user can tap the email button on the home screen, which sends them a detailed report of how many repetitions of each exercise were performed in that session. There is also a quit button that exits the program.

The starter GUI has a picture of Arnold Schwarzenegger where the user can tap the respective body part to open the respective exercise tracking application (a CV2 window).

Hardware

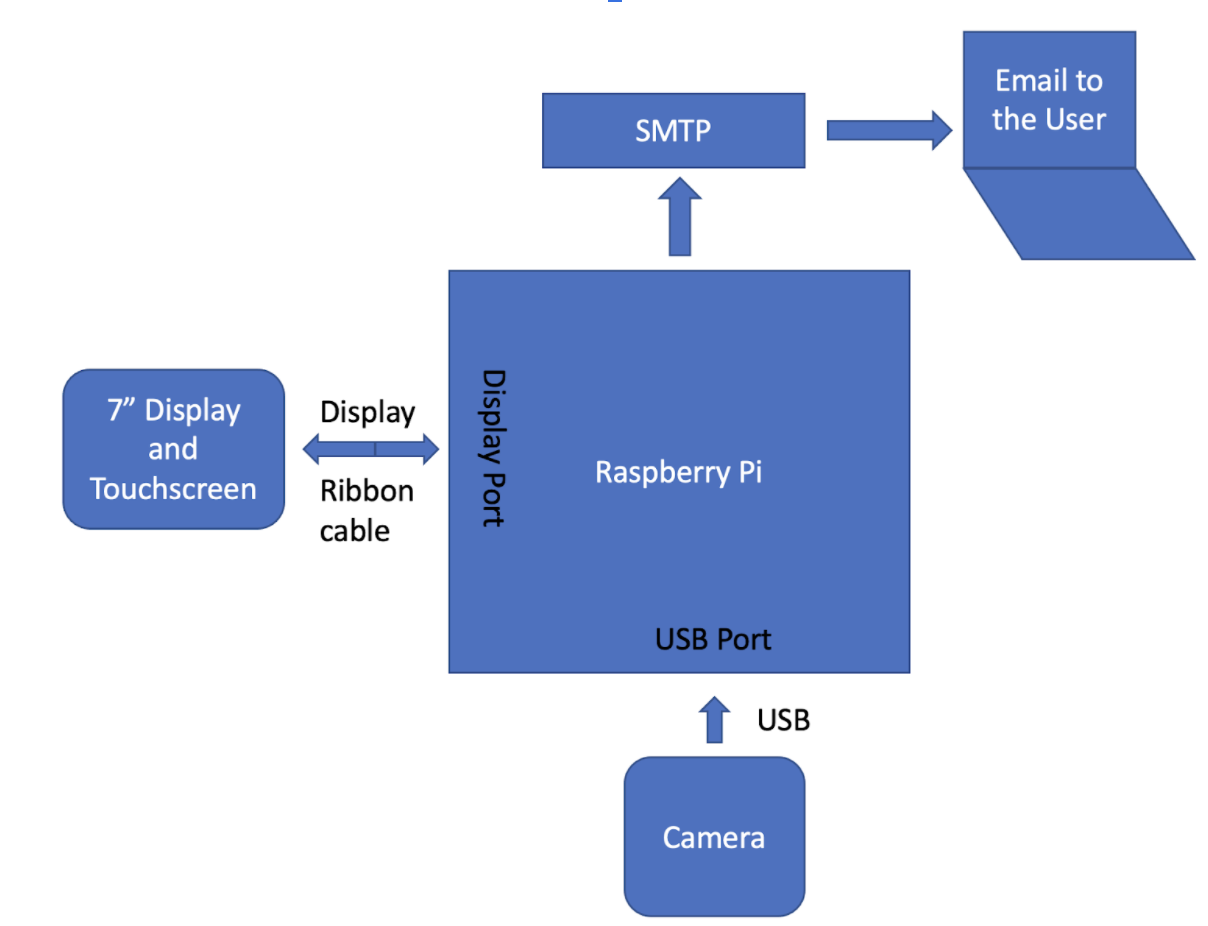

The hardware used for this project is relatively simple. All processing is done on a Raspberry Pi 4 with an ARM Cortex-A72 processor, 2 GB of RAM and a 16 GB SD card. For video input, the Raspberry Pi uses a camera to capture the user exercising. For human-computer interaction, the project uses a touchscreen display, which shows the output of the program and takes the user’s input for exercise selection and for the email and quit buttons.

Camera

We started out with a Raspberry Pi Camera Module v2, an 8MP camera that can record 1080p video at 30fps or 720p video at 60fps. It uses a Sony IMX219 CMOS sensor. To connect the camera, the PiTFT was removed to expose the ports on the Raspberry Pi. The camera, which has its own ribbon cable, was then connected directly to the camera port on the Raspberry Pi. Note that the ribbon cable can be inserted either way round, but it only works one way, because the metal contacts are on one side only. It is therefore important to check which side the contacts are on before inserting the cable into the connector, and then to push down the black lever of the connector to hold the cable firmly in place. Once the camera was connected, we tried to capture video on the Raspberry Pi using sample code to test the camera. This did not work at first, because the camera module had not been enabled in the Interfaces tab of the Raspberry Pi configuration settings. The following steps were followed to rectify this:

- Click on the main menu of the Raspberry-Pi on the desktop window

- Open Preferences and then click on Raspberry-Pi Configuration

- Go to the Interfaces Tab

- Check the 'Enable' box in front of the camera

We tested by running the sample code again, and this time we were able to get the camera feed display on the screen.

In further experiments, we noticed that the camera was very sensitive to light, and we also did not have a good way to mount it. This was causing problems with pose estimation, so after speaking with the TA we decided to try a separate USB camera that had a mount built into its body. We connected the USB camera and ran the sample code, but we were still seeing the feed from the Raspberry Pi camera. So we shut down the entire system, disconnected the Raspberry Pi camera, and restarted the system. This time, we were able to get the feed from the USB camera. An alternative would have been to change the device index passed to OpenCV: the call we were using was cv2.VideoCapture(0), which could have been changed to cv2.VideoCapture(1).

Touchscreen

We started our testing with the PiTFT. One of the requirements of this system was to display everything on the screen attached to the Raspberry Pi and not on the desktop monitor. We initially developed our OpenCV programs while the desktop monitor was connected to the Raspberry Pi over HDMI. This worked perfectly well, as the OpenCV framework found an X server (started with ‘startx’) to run on. Next, we tried running the OpenCV window on the PiTFT, but this resulted in an error saying ‘Startx server not found’. We tried changing the display environment variables to fb1 as in Lab 2, while keeping the HDMI connected. This showed the same error. We also tried disconnecting the HDMI and changing the variable to fb0, which produced the same error again. Upon consultation with Prof. Skovira, we were told that we would need to configure the Raspberry Pi to display the desktop on the PiTFT. To do this, we followed these steps to set up the X11 server on the Raspberry Pi:

- Installed the frame buffer driver: sudo apt-get install xserver-xorg-video-fbdev

- A config file was created in /usr/share/X11/xorg.conf.d/99-fbdev.conf with the following contents:

    Section "Device"
      Identifier "myfb"
      Driver "fbdev"
      Option "fbdev" "/dev/fb1"
    EndSection

- Reboot the RPi

Once this was done, we were able to see the desktop bootup on the PiTFT.

While running the CV2 window on the PiTFT, we noticed that the PiTFT’s resolution of 320 x 240 pixels was too small for our CV2 window, which was set up at 640 x 480 pixels. We could have shrunk the window, but the result would have been too small to view both the counter and the video of the person exercising. To solve this problem, we switched to a 7-inch touchscreen for the Raspberry Pi, which we borrowed from the lab. This screen has a resolution of 800 x 480 pixels, large enough for a CV2 window that shows all parts of the program clearly without squeezing them into a tiny area. The 7” display connects to the display connector of the Raspberry Pi and draws 5V power from the Raspberry Pi header (5V from Pin 2 and GND from Pin 6). Setting up this display did not require any additional hardware or software changes.

One issue we encountered was that when we connected to the RPi over SSH from a separate computer and tried to launch a CV2 window, we got the error message ‘startx server not found’. This error was fixed by explicitly setting the DISPLAY environment variable in the script as follows: os.environ['DISPLAY'] = 'DISPLAY'.
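The idea can be sketched as follows. This is only an illustration: the helper name ensure_display is hypothetical, and the value ":0" assumes the Pi's desktop session runs on the first local X display, which is the usual default but is not stated in this write-up.

```python
import os


def ensure_display(default=":0"):
    """Point GUI libraries at a local X display when none is set.

    Over SSH the DISPLAY variable is normally unset, so OpenCV cannot
    find an X server to draw on. ":0" is an assumed default value.
    """
    # setdefault leaves an existing value (e.g. from X forwarding) untouched.
    return os.environ.setdefault("DISPLAY", default)


# Call this before the first cv2.namedWindow() or cv2.imshow() call.
active_display = ensure_display()
print("Using X display:", active_display)
```

Setting the variable only when it is missing keeps the script usable both from the Pi's own desktop and from a remote shell.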

Software

Pose Detection

For the pose detection aspect of our project, we initially used the OpenPose library [1]. This framework detects keypoints on the human body, face, hands and feet (a keypoint is a point of interest described by its 2D position, scale and orientation in the image) in a single-shot manner, meaning that a prediction is made for each frame on its own. However, this library ran at a very low frame rate of 1 FPS on our system.

We hence decided to switch to the MediaPipe framework [2] developed by Google for pose detection.

The installation for this library on RPi was done using the following command: sudo pip install mediapipe-rpi4.

This framework uses the BlazePose model [3] that Google presented at CVPR 2020. The pose detection method uses an ML model to infer 33 2D landmarks of a human body from a single image frame. This model has several advantages over other keypoint detection algorithms: it localizes a larger number of keypoints, which makes it better suited to fitness applications, where the keypoints move quickly between frames. Moreover, most current pose tracking algorithms rely on a GPU for decent real-time performance, whereas MediaPipe achieves it on the CPU alone. With this library, the frame rate of our system improved significantly, to 6 FPS.

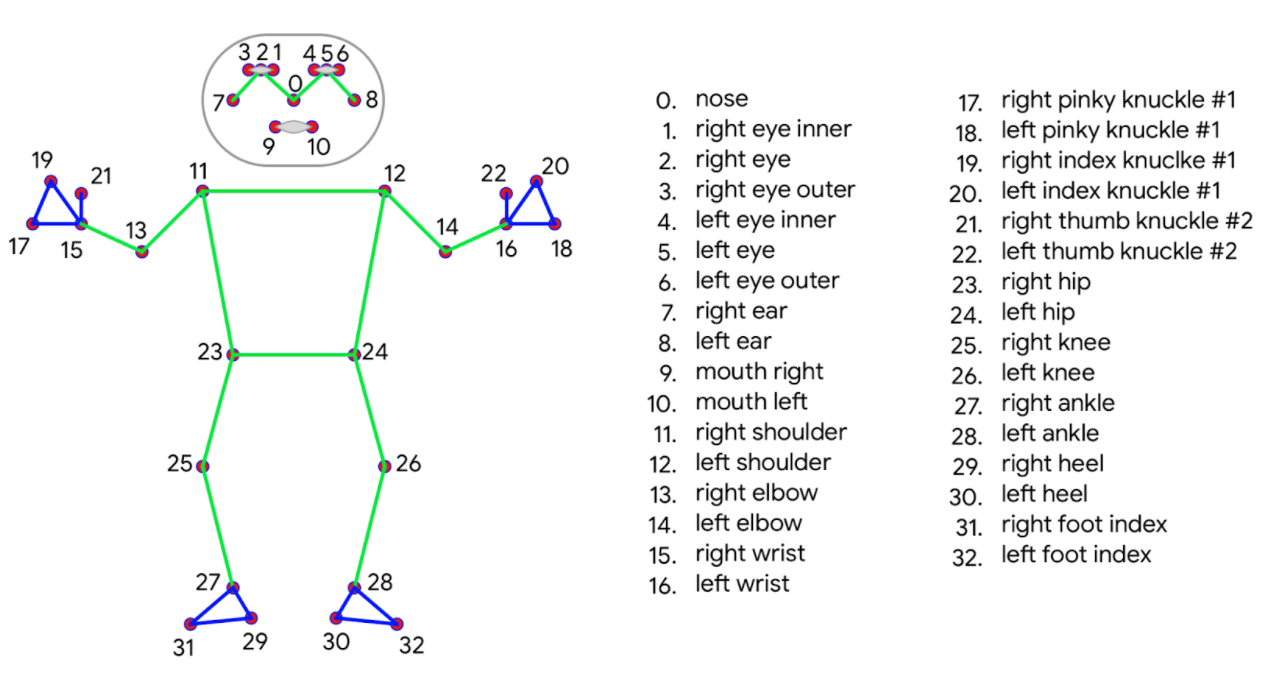

Another advantage of MediaPipe is the human body topology used for inference. Previous pose tracking methods have relied on the COCO topology [4], which comprises 17 landmarks spanning the arms, legs, face and torso. A downside of this topology is that the limbs are localized only at the ankles and wrists, so it does not capture information about the orientation of the legs and arms [5]. A larger set of keypoints is needed to track activities such as dancing and working out. The BlazePose model improves upon this with a new topology comprising 33 keypoints [6], shown below:

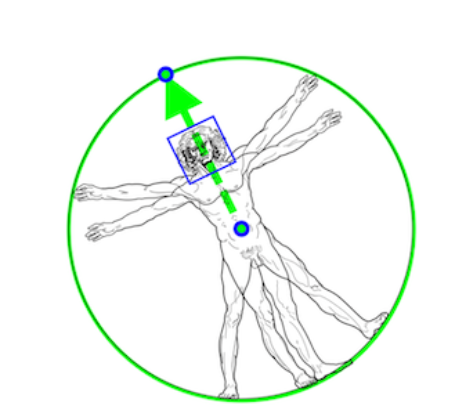

Before running the landmark detection model using this topology, MediaPipe runs a pose detection algorithm to locate the person. This detector is based on the BlazeFace model that Google developed for face detection, modified to detect people. It predicts two keypoints that encode the center of the person and the scale and orientation of a circle drawn around the body. Taking inspiration from Leonardo’s Vitruvian Man [7], Google modified the BlazeFace model to predict the midpoint of the person’s hips, the radius of a circle that circumscribes the entire body, and the incline angle of the line connecting the midpoints of the hips and shoulders [6]. The following figure shows a Vitruvian Man aligned via the 2 keypoints that BlazePose predicts, along with the bounding box for the face.

Using the MediaPipe library, we can directly obtain the landmarks for the different joints of a human body and use them to track exercise movements. We first capture individual frames from the camera and convert each one from BGR (the default image representation in OpenCV) to RGB. The converted image is then passed to the process() method of a mp.solutions.pose.Pose object for pose and landmark detection. When creating this object, we set the minimum confidence level (a measure of how confident the system is in a prediction) for landmark detection and for pose tracking. A lower confidence level lets in more false positives, while a higher confidence level gives fewer false positives but leaves a very tight margin of error, so valid poses may be rejected. Based on trial and error, a confidence level of 50% worked best for both landmark detection and pose tracking.

After the predictions are made on the particular image frame, we can extract the landmarks from the respective image frame and accordingly implement a tracking mechanism for different exercises.
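The per-frame flow described above can be sketched roughly as follows. This is an illustrative outline, not the project's actual code: the function names, the choice of the left wrist, and the max_frames limit are assumptions made for the example. MediaPipe reports landmark coordinates normalized to the range 0 to 1, so they are scaled by the frame size to get pixel positions.

```python
def landmark_to_pixels(landmark_x, landmark_y, frame_width, frame_height):
    """Convert MediaPipe's normalized [0, 1] coordinates to pixel coordinates."""
    return int(landmark_x * frame_width), int(landmark_y * frame_height)


def track_left_wrist(camera_index=0, max_frames=100):
    """Print the left-wrist pixel position for up to max_frames frames."""
    # Imported here so the helper above stays usable without the camera stack.
    import cv2
    import mediapipe as mp

    mp_pose = mp.solutions.pose
    capture = cv2.VideoCapture(camera_index)
    try:
        # 0.5 corresponds to the 50% confidence level reported in the text.
        with mp_pose.Pose(min_detection_confidence=0.5,
                          min_tracking_confidence=0.5) as pose:
            for _ in range(max_frames):
                ok, frame = capture.read()
                if not ok:
                    break  # camera unavailable or stream ended
                # OpenCV delivers BGR frames; MediaPipe expects RGB.
                rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
                results = pose.process(rgb)
                if results.pose_landmarks is None:
                    continue  # nobody detected in this frame
                wrist = results.pose_landmarks.landmark[
                    mp_pose.PoseLandmark.LEFT_WRIST]
                height, width = frame.shape[:2]
                print(landmark_to_pixels(wrist.x, wrist.y, width, height))
    finally:
        capture.release()
```

Calling track_left_wrist(camera_index=1) would select a second camera instead of the default one.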

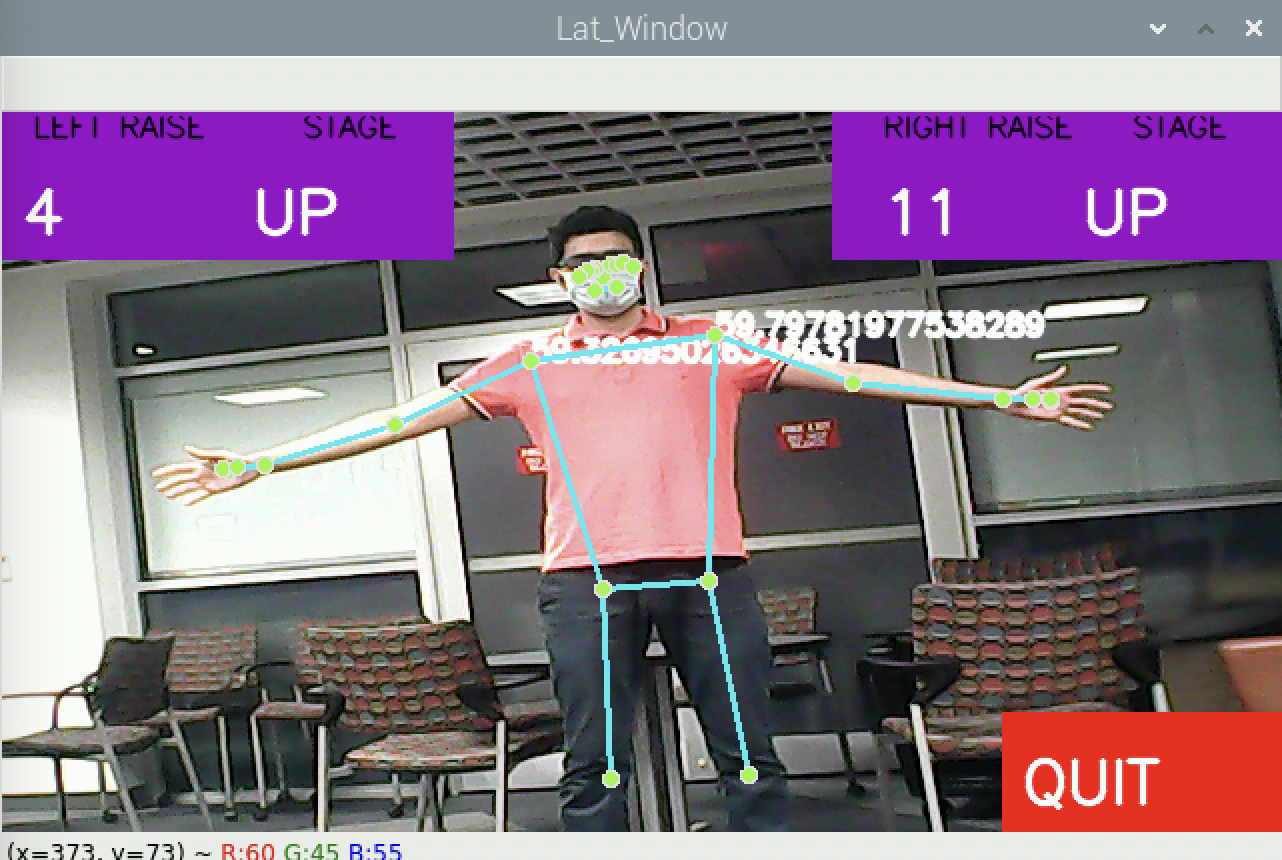

An example of what the pose tracking and landmark detection look like for a lateral raise exercise is as follows:

Exercise Algorithm Design

After we were able to get a complete set of landmarks from the MediaPipe Library, the next task involved using these landmarks to identify how each body part was moving as the frames progressed. A main program called cv2_GUI.py was created which would run the homescreen of the program. Another program called util.py was created which housed the functions used for tracking the body part movements. This program consisted of two functions:

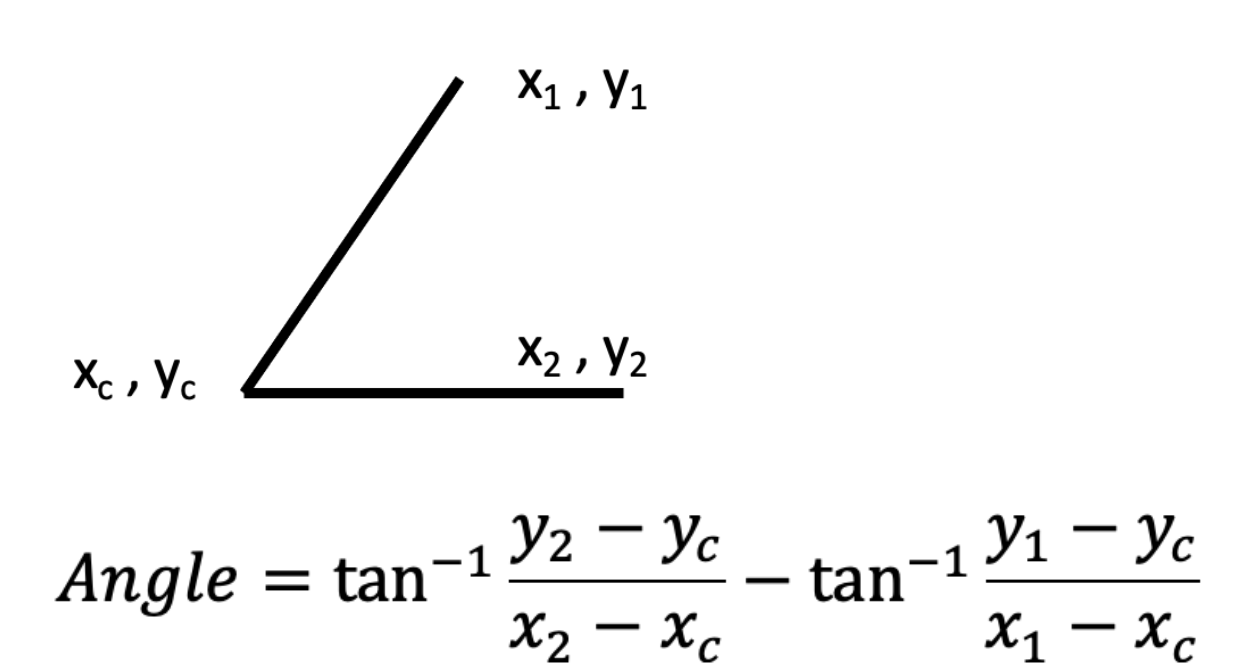

- Angle Measurement: This function calculates the angle formed by any 3 landmarks, measured at the middle landmark. It uses the inverse tangent function in the numpy library on the x and y coordinates of each of the two segments to get their absolute angles, and then subtracts one absolute angle from the other to get the angle between the segments. Whenever the result exceeds 180 degrees, we subtract it from 360 so that the reported angle always lies between 0 and 180 degrees.

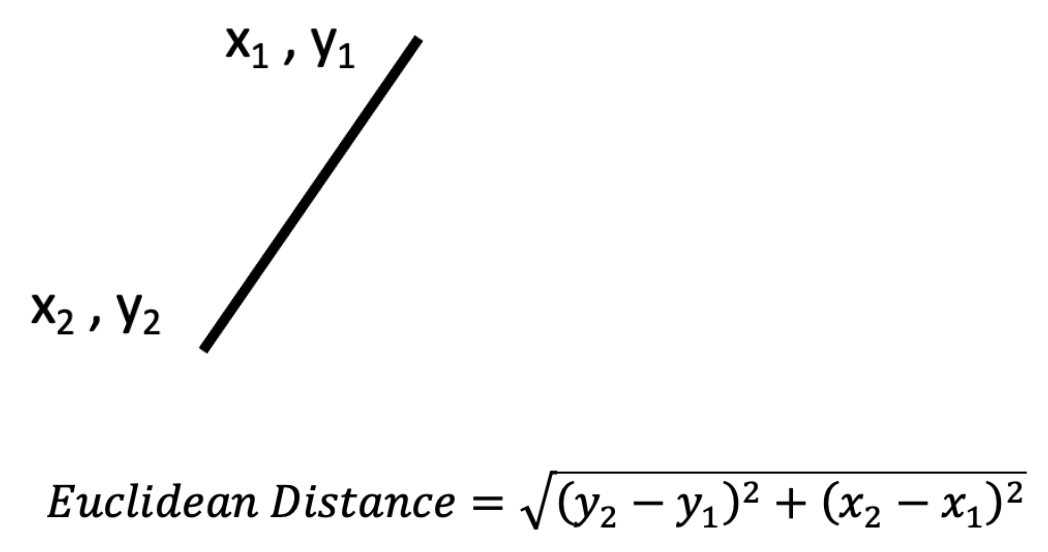

- Euclidean Distance Measurement: this function calculates the distance between any two landmarks by using their coordinates (x1, y1) and (x2, y2).
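A minimal sketch of these two helpers is given below. It uses the standard math module instead of numpy to stay self-contained, the function names are hypothetical, and it assumes that angles above 180 degrees are folded back into the 0 to 180 range, which is what the thresholds used for the exercises (up to 160 degrees) require.

```python
import math


def angle_between(first, middle, last):
    """Angle in degrees (0 to 180) at `middle`, formed with `first` and `last`.

    Each argument is an (x, y) pair.
    """
    # Absolute angle of each segment, measured from the middle landmark.
    first_angle = math.atan2(first[1] - middle[1], first[0] - middle[0])
    last_angle = math.atan2(last[1] - middle[1], last[0] - middle[0])
    angle = abs(math.degrees(last_angle - first_angle))
    # Fold reflex angles back so the result never exceeds 180 degrees.
    if angle > 180.0:
        angle = 360.0 - angle
    return angle


def euclidean_distance(point_a, point_b):
    """Straight-line distance between two (x, y) points."""
    return math.hypot(point_b[0] - point_a[0], point_b[1] - point_a[1])


# A right angle at the elbow: shoulder to the right, wrist straight up.
print(angle_between((1, 0), (0, 0), (0, 1)))
print(euclidean_distance((0, 0), (3, 4)))
```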

Once we have the ability to calculate the euclidean distance and the angles between any of the landmarks, we can compare these values in successive frames to determine the motion of the body parts. We used this concept to track 5 different exercises for 5 different parts of the human body. Each function was written in 5 separate python scripts:

- ChestFly_Counter_mod.py: This script contains a function that counts the repetitions of the chest fly, in which the user, holding a weight in each hand, brings their arms close together and then spreads them wide again. To do this, we calculate the Euclidean distance between the user’s two wrists. If the distance is below a lower threshold (100 pixels), the arms are considered to be in the ‘close’ state. If the distance is above an upper threshold (250 pixels), the arms are considered to be in the ‘wide’ state. Whenever the state transitions from close to wide, the chest fly counter increments by 1, and this is how the number of repetitions is tracked.

- Curl_Counter_mod.py: The bicep curls exercise module tracks the number of curls performed by the user on each arm independently. To do this, the angle between the shoulder, elbow and wrist of each arm is calculated. If this angle is greater than an upper threshold (160 degrees), the arm is in the ‘down’ state. If the angle is less than a lower threshold (30 degrees), the arm is in the ‘up’ state. Each time there is a transition from the up state to the down state, the counter for that arm is incremented, and this is how the number of bicep curls for each arm is tracked.

- Lat_Counter_mod.py: The lat raises exercise module tracks the number of shoulder lat raises performed by the user on either side independently. In order to calculate this, the angle between the hip, shoulder and elbow on each arm is calculated. If this angle is greater than an upper threshold (50 degrees), then the arms are in the ‘up’ state. If the angle is less than a lower threshold (30 degrees), then the arms are in the ‘down’ state. Each time there is a transition from the up state to the down state, the counter is incremented for that particular side, and this is how the number of repetitions for lateral shoulder raises are counted.

- Squats_Counter_mod.py: The squats exercise module is launched when the user presses on the legs exercise on the homepage. In order to calculate this, the angle between the hip, knee and ankle of a user is calculated. If this angle is less than a lower threshold (120 degrees), then the user is in the ‘squat’ position and if the angle is above an upper threshold (130 degrees) then the user is in the ‘straight’ position. Whenever the user transitions from the squat position to a straight position, the counter for the squats is incremented and eventually this is how the program is able to keep track of the number of squats the user has performed in that session.

- Crunches_Counter_mod.py: Whenever the user clicks on the abs on the home page, the crunches counter program opens up. In order to calculate the number of crunches, the angle between the user’s knee, hip and shoulder is calculated. If this angle is less than a lower threshold (80 degrees), the user is in the ‘down’ state and if this angle is more than an upper threshold (90 degrees), then the user is in the ‘up’ state. Whenever the user transitions from up to down state, the counter is incremented. This mechanism is used to keep a track of the number of crunches performed by the user in the session.
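All five counters follow the same pattern: two thresholds with a dead band between them, and a count on one particular state transition. The sketch below shows that pattern in a generic form. The class name and constructor arguments are hypothetical and are not taken from the project's scripts; only the threshold values and state names come from the descriptions above.

```python
class RepCounter:
    """Counts repetitions from a stream of measurements using two thresholds."""

    def __init__(self, lower, upper, low_state, high_state, count_from, count_to):
        self.lower = lower            # below this value -> low_state
        self.upper = upper            # above this value -> high_state
        self.low_state = low_state
        self.high_state = high_state
        self.count_from = count_from  # a rep is counted on count_from -> count_to
        self.count_to = count_to
        self.state = None             # unknown until a threshold is crossed
        self.count = 0

    def update(self, value):
        """Feed one measurement (angle or distance) and return the rep count."""
        if value < self.lower:
            new_state = self.low_state
        elif value > self.upper:
            new_state = self.high_state
        else:
            new_state = self.state    # inside the dead band: keep the old state
        if self.state == self.count_from and new_state == self.count_to:
            self.count += 1
        self.state = new_state
        return self.count


# Bicep curl: 'up' below 30 degrees, 'down' above 160, count on up -> down.
curl = RepCounter(30, 160, "up", "down", count_from="up", count_to="down")
for elbow_angle in [170, 90, 20, 90, 170, 20, 170]:
    curl.update(elbow_angle)
print("curls:", curl.count)

# Chest fly: 'close' below 100 px, 'wide' above 250 px, count on close -> wide.
fly = RepCounter(100, 250, "close", "wide", count_from="close", count_to="wide")
for wrist_distance in [300, 180, 80, 180, 300]:
    fly.update(wrist_distance)
print("chest flies:", fly.count)
```

The gap between the two thresholds keeps small jitter in the landmarks from being counted as extra repetitions.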

Emailing Tracked Information

The next task involved setting up an email functionality for sending mail to the user once they are done performing their workout. For this we decided to use the SMTP (Simple Mail Transfer Protocol) server instance with a secure connection for sending email to the address specified in the message body. This implementation is based on the tutorial highlighted in [8].

Python can send emails over SMTP using the built-in smtplib library, which implements the protocol defined in RFC 821. The first step was to set up a Gmail account that our Python code can access and use as the sender address for emails to the user. The next step was to encrypt the SMTP connection before sending anything. For this we use SMTP_SSL(), which opens the connection to the mail server over SSL (Secure Sockets Layer) from the start. Once the secure SMTP connection is established, the email is sent with the sendmail() function.

We also wanted the email body to have customizable fonts and structure. This can be done by writing the email body as HTML, which can be styled as desired. For this we used a MIMEText() object to hold the HTML body and attach it to the message that is sent via the sendmail() function.
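A minimal sketch of this flow is shown below, under some assumptions: the function names, the subject line, the table layout and the example addresses are all invented for illustration, and the host and port are Gmail's standard SMTP-over-SSL endpoint. Gmail typically also requires an app password or similar account setting before a script can log in.

```python
import smtplib
import ssl
from email.mime.multipart import MIMEMultipart
from email.mime.text import MIMEText


def build_report(counts, sender, recipient):
    """Build an HTML email listing the repetitions per exercise."""
    rows = "".join(
        f"<tr><td>{name}</td><td>{reps}</td></tr>" for name, reps in counts.items()
    )
    html = f"<html><body><h2>Workout report</h2><table>{rows}</table></body></html>"
    message = MIMEMultipart("alternative")
    message["Subject"] = "Workout Buddy report"  # hypothetical subject line
    message["From"] = sender
    message["To"] = recipient
    message.attach(MIMEText(html, "html"))
    return message


def send_report(message, password, host="smtp.gmail.com", port=465):
    """Send the message over an SSL-wrapped SMTP connection."""
    context = ssl.create_default_context()
    with smtplib.SMTP_SSL(host, port, context=context) as server:
        server.login(message["From"], password)
        server.sendmail(message["From"], message["To"], message.as_string())


# Building the message needs no network access; sending it does.
report = build_report({"Squats": 12, "Crunches": 20},
                      "sender@example.com", "user@example.com")
print(report["Subject"])
```

Keeping message construction separate from sending makes it possible to check the report contents without contacting the mail server.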

Result and Conclusion

Our team was able to implement all 5 exercise trackers successfully. We were able to keep track of the repetitions of each exercise quite precisely, and a detailed demonstration of this can be seen in the video below.

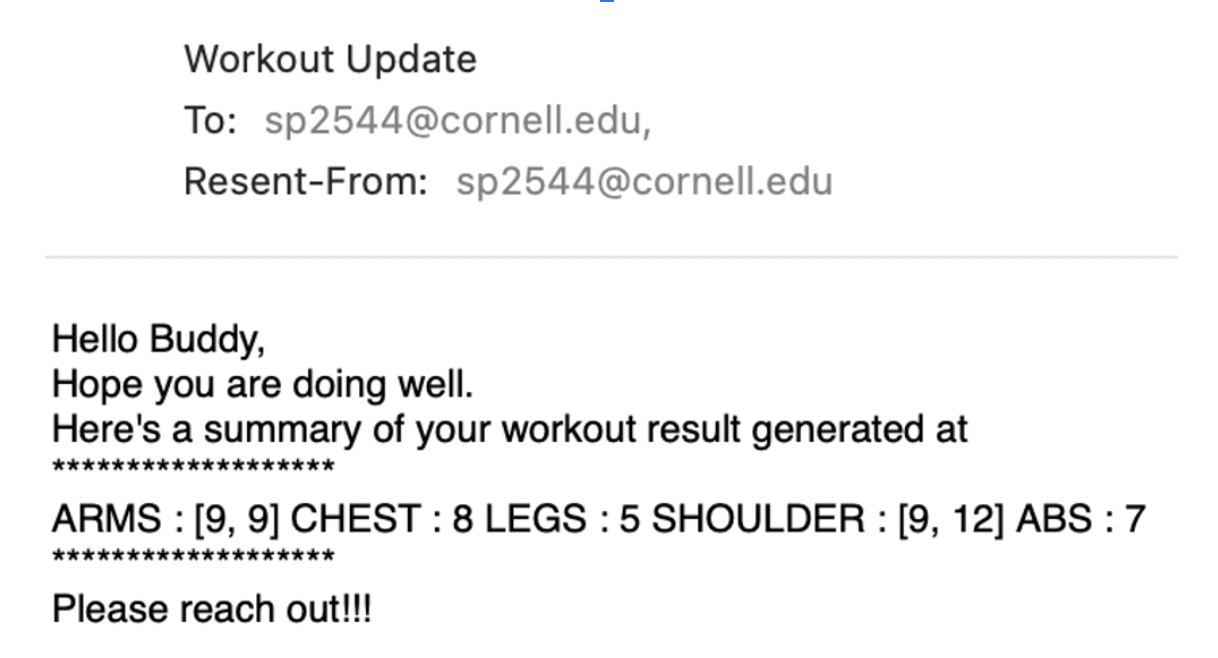

The algorithm was able to track the exercises as long as the parts it was tracking for that particular exercise were within the frame of the camera capture. We tried testing the program while performing the exercises at various speeds, and the program is able to keep track of the repetitions for all speeds at which a regular user would perform these exercises. The program does face an issue if there are multiple people in the field of view, and at times it tracks the movement of the person who is not the one of interest. We tested out the email functionality and are successfully able to receive a complete report of the workout session in the email. A screenshot of the email received is shown below:

After an email is sent, all the counters are reset to 0, and a new session begins. We verified that this indeed worked as expected. The Quit buttons on all windows also work as expected.

Work Distribution

Project group picture - Ishaan Thakur (left) and Shreyas Patil (right)

The project was for the most part done collaboratively during the lab sessions. Both members worked together on testing the different pose estimation libraries and on implementing the MediaPipe library. Ishaan worked on implementing the Chest, Arms and Leg exercises, while Shreyas worked on the Shoulder and Abs exercises. The GUI and the email functionality were developed by both members of the team together.

Parts List

- Raspberry Pi with SD Card - Provided in Lab

- 7” Touchscreen (Used) - $50.00

- USB Camera - $25

Total Cost: $75

References

[1] OpenPose Library

[2] MediaPipe Library

[3] BlazePose: On-device Real-time Body Pose tracking

[4] Standard COCO Topology

[5] Google AI Blog: On-device, Real-time Body Pose Tracking with MediaPipe BlazePose

[6] MediaPipe Pose Detection Module

[7] Vitruvian Man

[8] Python Email Sending

Code Appendix

Our code can be found at the following GitHub Repo: LINK.