Music Motion

Sabrina Herman (sh997) and Emily Vick (ev238)

December 17, 2020

Our goal was to create an interactive dance game in which users can

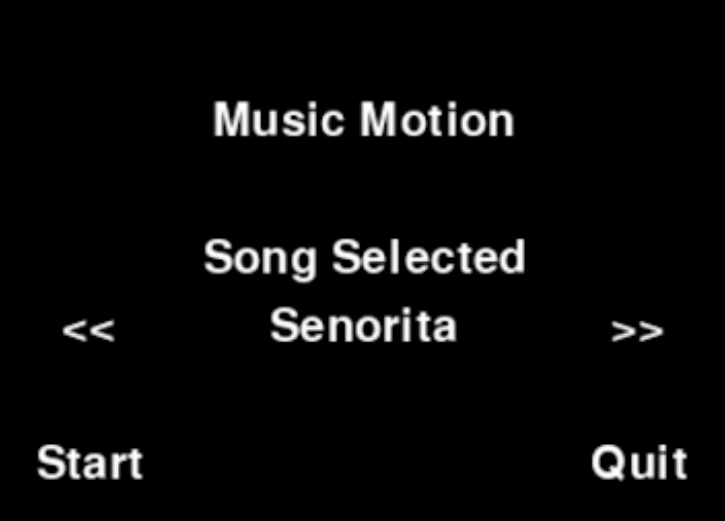

The project design consists of three main components: the game GUI, the accelerometer controllers, and the song movements. The entire project is written in Python, and the game GUI uses PyGame. The GUI has a home screen with options to choose a song, start, or quit. The player navigates the home screen by touching the PiTFT screen or using an external mouse. Choosing a song opens a game session that displays the current motion for each controller as well as the upcoming motions. The player's score updates according to the type of motion and the player's timing.

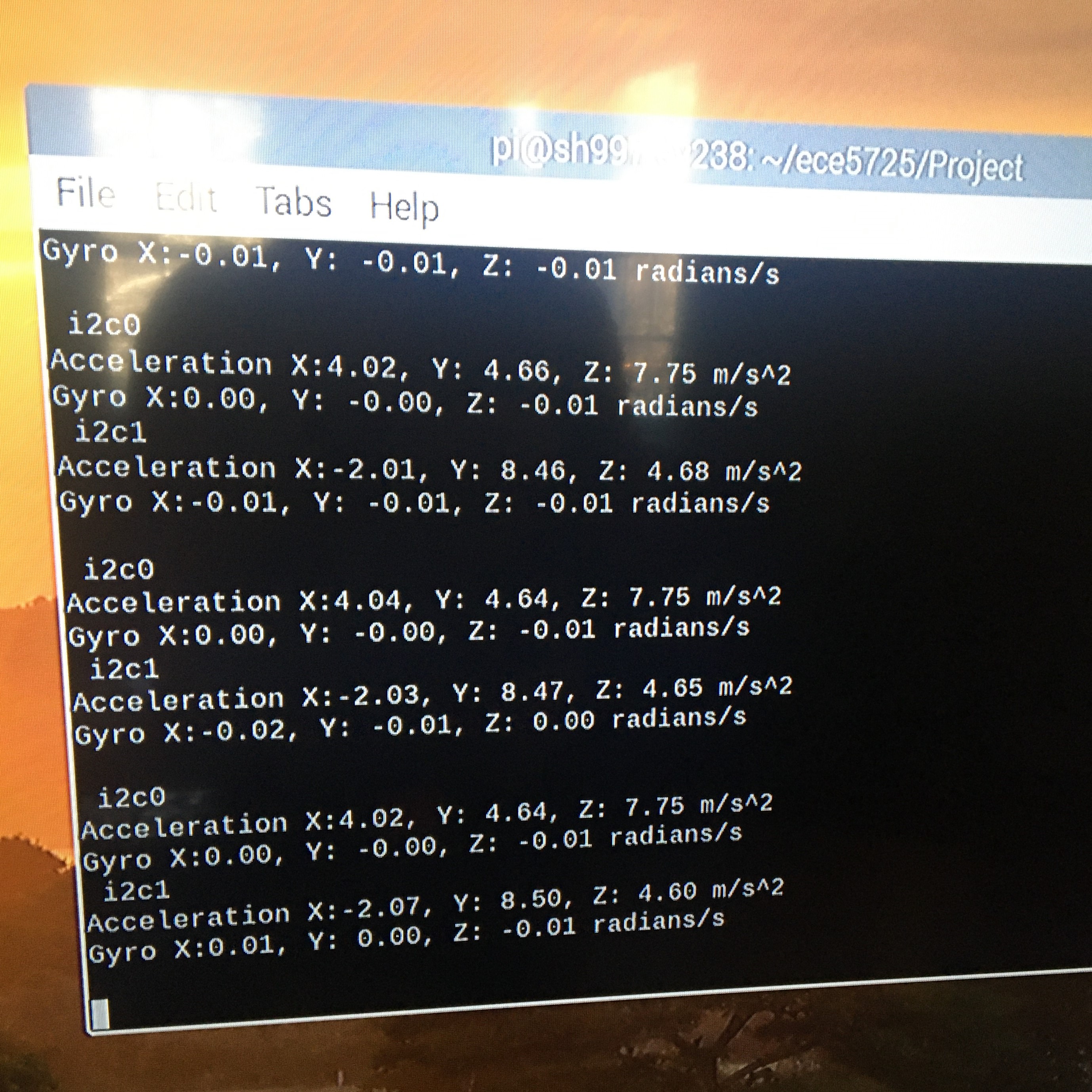

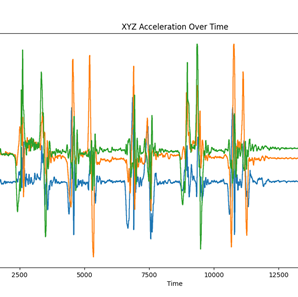

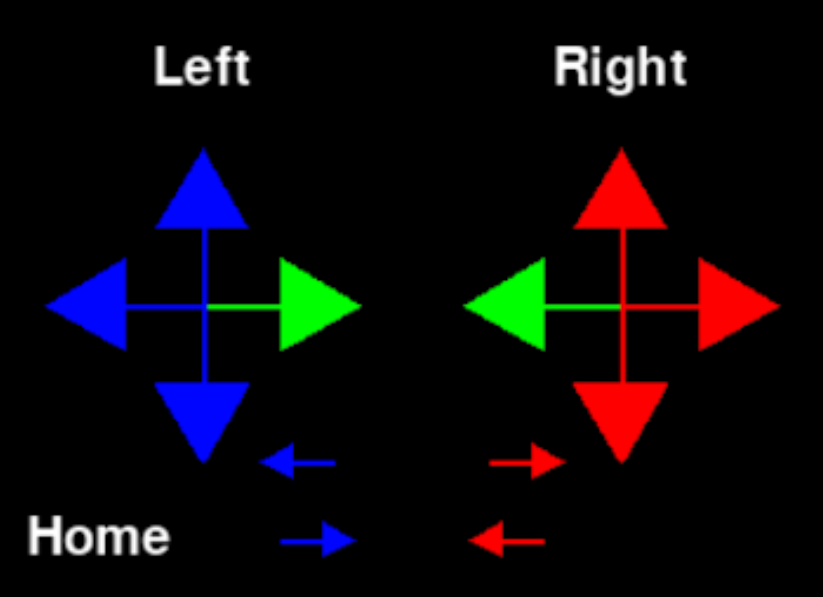

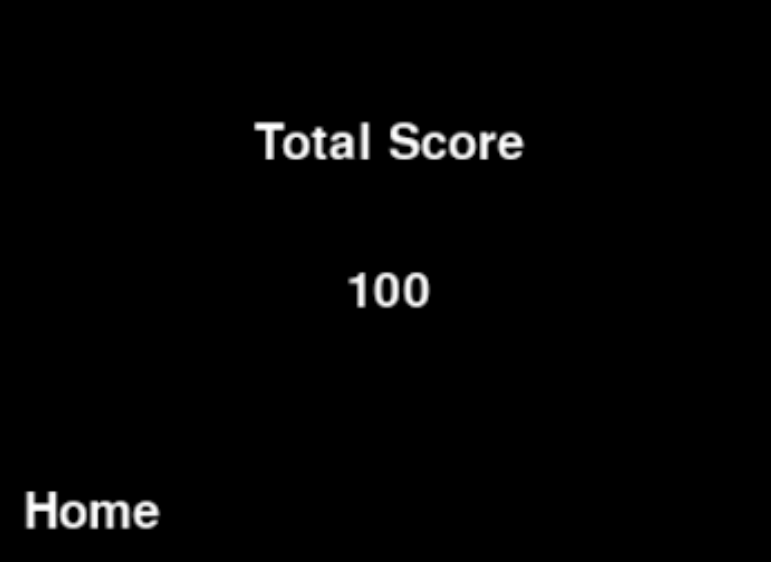

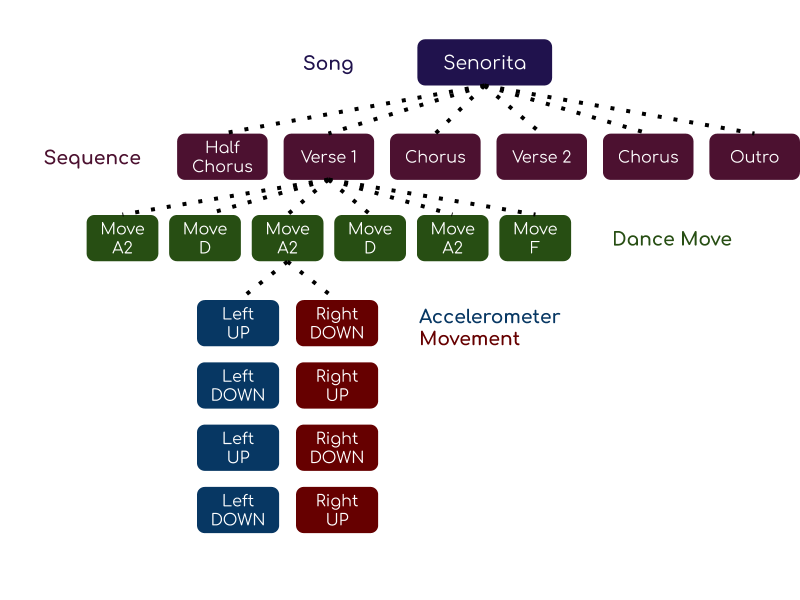

The two controllers correspond to the right and left hands and are distinguished on screen by color (red for right, blue for left). The logic and implementation are the same for both hands. Motion is read by an accelerometer attached to each hand, and the accelerometer data is processed into one of four designated directions: up, down, left, or right. The chosen song is associated with sequences of “dance moves”, each consisting of four left-hand and four right-hand movements, for example: left up and right up, left down and right down, left up and right up, and left down and right down. Based on the current song movement and the direction detected by the accelerometer, points may be added to the score. The score is displayed to the user at the end of the game.
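The scoring idea described above can be sketched as follows. This is only an illustration under stated assumptions: the function name `score_step`, the point value, and the sample readings are hypothetical, and the sketch leaves out the timing component of the score.

```python
# Hypothetical point value; the report does not state the real one.
POINTS_PER_HAND = 10


def score_step(expected, detected):
    """Return the points earned for one step of a dance move.

    expected and detected are (left, right) pairs of directions
    ("up", "down", "left", "right"). A detected entry may be None
    when no clear motion was read for that hand.
    """
    points = 0
    for want, got in zip(expected, detected):
        if got is not None and got == want:
            points += POINTS_PER_HAND
    return points


# The example sequence from the text, as four (left, right) pairs.
sequence = [("up", "up"), ("down", "down"), ("up", "up"), ("down", "down")]

# Made-up controller readings, for illustration only.
readings = [("up", "up"), ("down", "left"), (None, "up"), ("down", "down")]

score = sum(score_step(e, d) for e, d in zip(sequence, readings))
print(score)
```

Treating each hand independently mirrors the statement that the logic is the same for both hands: a step can earn points for one hand even if the other hand misses.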

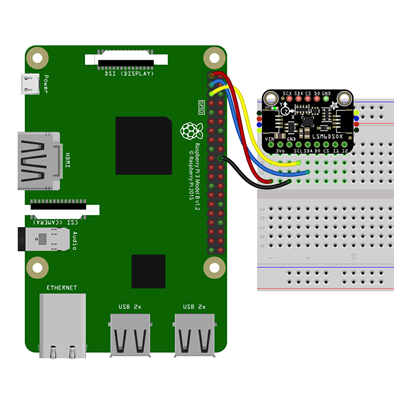

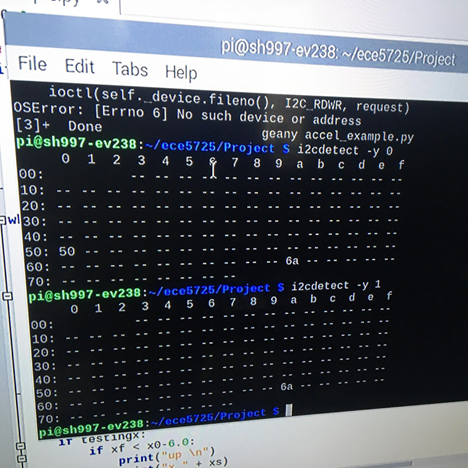

We chose the Adafruit LSM6DSOX 6 DoF Accelerometer and Gyroscope to detect the user’s motion throughout the game. We explored the idea of using the accelerometers in our phones, but ultimately decided that wasn’t feasible and used the Adafruit accelerometers instead. These accelerometers communicate over I2C and came with some built-in libraries for us to use. The most important aspects of working with the accelerometers were setting up and wiring them, determining proper thresholds to indicate up/down and left/right movement, and integrating them into our game. Read more about how we designed and tested these aspects by clicking on the images below.
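A minimal sketch of threshold-based direction detection, assuming each reading is an (x, y, z) acceleration tuple in m/s^2 (the form exposed by the `acceleration` property of Adafruit's CircuitPython LSM6DS driver). The axis orientation, the threshold value, and the name `classify` are assumptions made for illustration; the actual thresholds were determined through testing and are not given here.

```python
from typing import Optional, Tuple

GRAVITY = 9.81    # m/s^2
THRESHOLD = 3.0   # hypothetical threshold in m/s^2, not the tuned value


def classify(accel: Tuple[float, float, float],
             threshold: float = THRESHOLD) -> Optional[str]:
    """Map one accelerometer reading to a direction, or None if too small.

    Assumes the sensor is mounted so that z points up when the hand is at
    rest (so z reads roughly +g) and x points to the player's right.
    """
    x, _y, z = accel
    vertical = z - GRAVITY   # remove the constant pull of gravity
    horizontal = x

    # Ignore readings where neither axis exceeds the threshold.
    if max(abs(vertical), abs(horizontal)) < threshold:
        return None

    # Pick the axis with the larger magnitude, then use its sign.
    if abs(vertical) >= abs(horizontal):
        return "up" if vertical > 0 else "down"
    return "right" if horizontal > 0 else "left"


for reading in [(0.0, 0.0, 9.81), (0.0, 0.0, 15.0), (-5.0, 0.0, 9.81)]:
    print(reading, classify(reading))
```

Choosing the dominant axis keeps a diagonal swing from registering as two directions at once; a real controller would likely also need some smoothing across several samples.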

Game_screen.py is the main file that is run when playing the game. Building the game screen drew on techniques from control_two_collide.py in Lab 2 and rolling_control.py in Lab 3. The text on the screen is stored in a dictionary of positions and text that can be blitted onto the screen. The on-screen buttons that trigger actions, such as starting the game or choosing a song, register a click when a MOUSEBUTTONUP event arrives with coordinates inside a designated range. The screen is set up and then checked for user actions in a master while loop, which exits when the "Quit" button is clicked. We made liberal use of functions to help with code organization and reuse. We also created a constants.py file that holds values such as text positions and colors, replacing magic numbers and making customization easier. Since we had created a GitHub repository, we could write and test the code on our laptops, away from the RPis, until we had to integrate the physical controllers. Click the images to learn more about the process for creating each screen.
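The button handling described above might look roughly like this. The sketch is kept free of PyGame so it runs anywhere; the dictionary layout (label mapped to center position), the coordinates, and the names `MENU_TEXT` and `button_at` are all hypothetical stand-ins for whatever constants.py actually contains.

```python
from typing import Dict, Optional, Tuple

# Hypothetical constants of the kind that could live in constants.py.
# Positions assume a 320x240 screen.
MENU_TEXT: Dict[str, Tuple[int, int]] = {
    "Start": (60, 200),
    "Choose Song": (160, 200),
    "Quit": (260, 200),
}
BUTTON_HALF_WIDTH = 40    # a click this close to the center counts (x)
BUTTON_HALF_HEIGHT = 20   # a click this close to the center counts (y)


def button_at(pos: Tuple[int, int],
              buttons: Dict[str, Tuple[int, int]] = MENU_TEXT) -> Optional[str]:
    """Return the label of the button containing pos, or None."""
    x, y = pos
    for label, (cx, cy) in buttons.items():
        in_x_range = abs(x - cx) <= BUTTON_HALF_WIDTH
        in_y_range = abs(y - cy) <= BUTTON_HALF_HEIGHT
        if in_x_range and in_y_range:
            return label
    return None


print(button_at((265, 190)))   # a click near the "Quit" label
print(button_at((160, 50)))    # a click on empty screen space
```

In a PyGame loop, each `pygame.MOUSEBUTTONUP` event carries the click coordinates in `event.pos`, which could be passed straight to a function like `button_at`; the same dictionary could also drive the loop that renders and blits each label.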

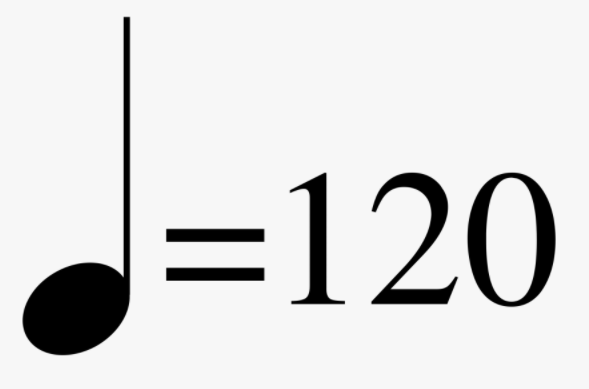

The song we chose is Senorita by Shawn Mendes and Camila Cabello. To create the movements associated with parts of the music, we created a movement class and a songs class. We then used the songs class to create a song object that holds all the information the game screen needs to play that song. Click the images to learn more about the process for creating songs and testing.
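One way a movement class and a songs class could fit together is sketched below. The field names, the audio file name, and the timestamps are hypothetical; the report does not show the actual class definitions.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Movement:
    start: float   # seconds from the start of the song (hypothetical field)
    left: str      # direction for the left hand
    right: str     # direction for the right hand


@dataclass
class Song:
    title: str
    audio_file: str
    # Movements are assumed to be stored in order of start time.
    movements: List[Movement] = field(default_factory=list)

    def current(self, t: float) -> Optional[Movement]:
        """Return the latest movement that has started by time t."""
        started = [m for m in self.movements if m.start <= t]
        return started[-1] if started else None

    def upcoming(self, t: float, count: int = 2) -> List[Movement]:
        """Return up to count movements that start after time t."""
        return [m for m in self.movements if m.start > t][:count]


# Illustrative data only; the times and file name are made up.
song = Song(
    title="Senorita",
    audio_file="senorita.mp3",
    movements=[
        Movement(0.0, "up", "up"),
        Movement(2.0, "down", "down"),
        Movement(4.0, "up", "up"),
        Movement(6.0, "down", "down"),
    ],
)

print(song.current(2.5))
print(song.upcoming(2.5))
```

Keeping the start time on each movement lets the game screen ask one object for both the current move and the next few moves, which matches the display of current and upcoming motions.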

The project was interesting and rewarding for both partners, and we had a lot of fun along the way. Ultimately, we succeeded in creating our Music Motion game and in all three aspects of the project: the accelerometers, the game screen, and the music.

We did a great job dividing up the work. By having Emily focus on the game screen and Sabrina focus on the accelerometers, we were able to successfully finish our prototype in time. We also did a good job sharing our progress with each other, which was important as we both needed a complete understanding of all Music Motion aspects to successfully integrate the components together.

We decided that phone accelerometers and music analysis were not viable solutions for our Music Motion game. However, this didn’t stop us from achieving our goals. We got a working game screen that displays current and upcoming dance moves, correct accelerometer input and scoring, and a song with dance moves synchronized to the music.

The results were successful. At the end, we had a playable version of Senorita where the user's movements contributed to the score.

We met the goals outlined in our initial proposal, but were unable to begin the stretch goals. We followed our planned timeline for completing each component and stayed on schedule throughout the project.

Some options for expanding upon the game could include

| Item | Number | Cost Per Unit | Total Cost |
|---|---|---|---|
| Breadboard Set | 2 | $5.99 | $11.98 |
| Accelerometers | 4 | $11.95 + express shipping | $71.95 |