ECE 5725 Project: Under Cover System

Created by Yixie Chen (yc2636), Zhuxian Mei (zm235)

"A protection for your stores"

Demonstration Video

Objective

During the COVID-19 pandemic, it is essential for everyone to follow the prevention guidelines to stop the spread of the virus. Social distancing and wearing a face mask are especially important in the service industry.

However, it is difficult for convenience store owners to keep track of their customers and ensure that every person is wearing a mask properly. Doing so either requires extra staff, which is costly, or leaves conditions unsafe. To solve this dilemma and create a safe environment for our community, we introduce our project, “Under Cover”.

Introduction


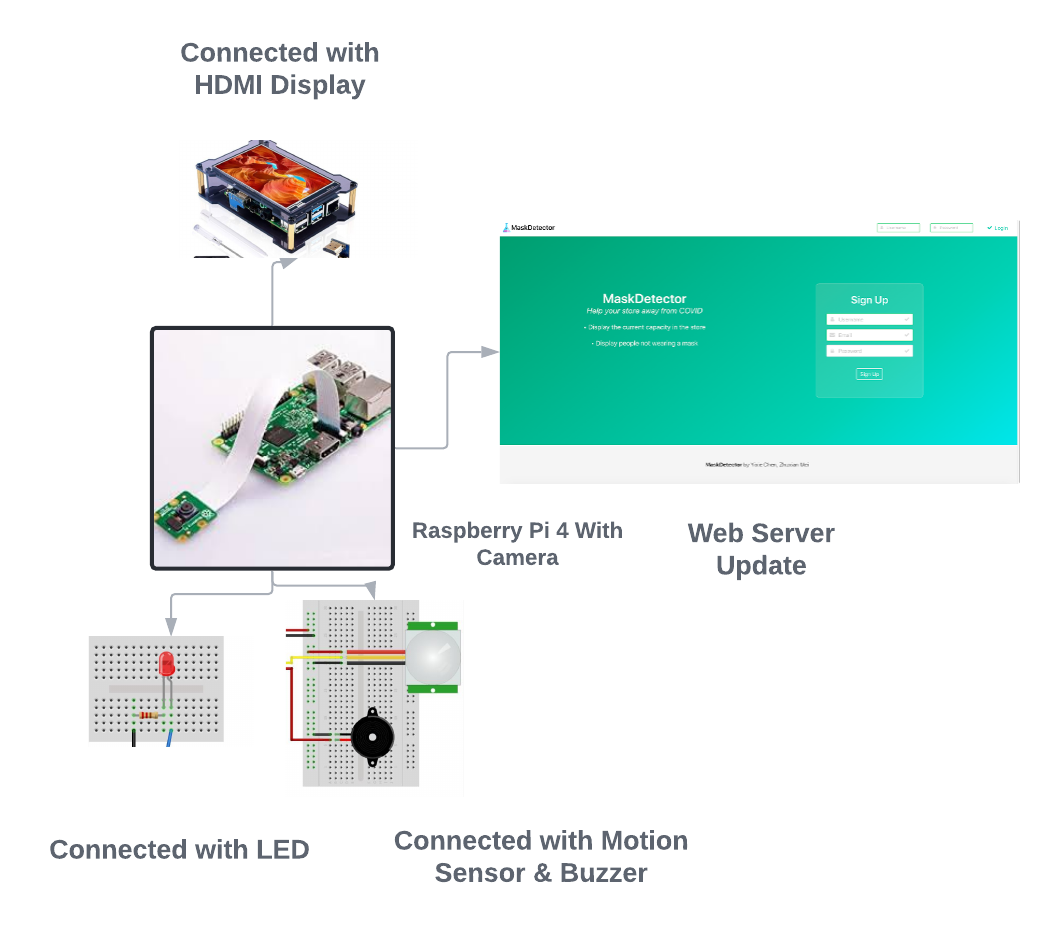

“Under Cover” is an embedded system that helps local store owners check whether customers are following COVID-19 prevention requirements. It also records the current capacity of the store, that is, the number of customers inside. “Under Cover” consists of a Raspberry Pi 4 with an HDMI display, a camera, LED lights, a buzzer, and a motion sensor.


Using image processing and machine learning techniques, the Raspberry Pi determines whether a customer is wearing a mask. When a customer enters through the front door, the Under Cover system captures an image of the person and classifies it. If the customer does not meet the requirements, the camera takes a picture of the customer and sends it to a website that we built to inform the owner. The buzzer and the red light are also triggered as an alert. If the customer is wearing a mask, they are permitted to enter the store, and the current capacity is updated on the website. If the store reaches its maximum capacity, the owner is informed.


Under Cover is an outstanding system that is capable of protecting our local community. It is inexpensive, fast, and easy to install. Start protecting your store today by using Under Cover!

Design

Our design has three major parts: mask detection software, website implementation, and physical construction. To build the project, we accomplished the following tasks:

1) Implemented code with OpenCV and TensorFlow to build a machine learning model

2) Trained the model with our own pictures, separated into mask and no-mask categories

3) Set up the camera and video stream display

4) Built the website and its status updates

5) Implemented the LEDs, buzzer, and motion detector to keep track of the store capacity

Image Processing Design

Part I: Set up

The first step in our project is to set up the software environment on the Raspberry Pi. Our project uses OpenCV, TensorFlow, and imutils. We follow this tutorial to compile the full build of OpenCV, which takes three hours. Here are some essential packages we downloaded to compile OpenCV.

Part II: Model Training

In this part, we first create our training set. We each take 800 photos of ourselves and separate them into two categories: photos with a mask on go in the “Mask On” set, and photos without a mask go in the “Mask Off” set. Following this tutorial, we build our own DNN to train our model. The model has the following structure:

Detection Model

```python
# imports added for completeness (tf.keras API)
from tensorflow.keras.applications import MobileNetV2
from tensorflow.keras.layers import (AveragePooling2D, Dense, Dropout,
                                     Flatten, Input)
from tensorflow.keras.models import Model

# load the MobileNetV2 network, ensuring the head FC layer sets are
# left off
baseModel = MobileNetV2(weights="imagenet", include_top=False,
                        input_tensor=Input(shape=(224, 224, 3)))

# construct the head of the model that will be placed on top of
# the base model
headModel = baseModel.output
headModel = AveragePooling2D(pool_size=(7, 7))(headModel)
headModel = Flatten(name="flatten")(headModel)
headModel = Dense(128, activation="relu")(headModel)
# headModel = Dense(36, activation="relu")(headModel)
headModel = Dropout(0.5)(headModel)
# two output classes, one probability each
headModel = Dense(2, activation="softmax")(headModel)

# place the head FC model on top of the base model (this will become
# the actual model we will train)
model = Model(inputs=baseModel.input, outputs=headModel)
```
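
Before a network like this can be trained, the photos have to be paired with labels and split into training and test sets. The following is a minimal sketch of that step, not our actual training script. The folder names mirror the “Mask On” and “Mask Off” sets described above. The directory layout, the helper names `collect_labeled_images` and `split_dataset`, the accepted file extensions, and the 80/20 split are all assumptions made for illustration.

```python
import os
import random

# Assumed layout: <root>/Mask On/*.jpg and <root>/Mask Off/*.jpg
CATEGORIES = ("Mask On", "Mask Off")
IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")  # assumed file types


def collect_labeled_images(root):
    """Return a list of (path, label) pairs, one per image file."""
    samples = []
    for label in CATEGORIES:
        folder = os.path.join(root, label)
        if not os.path.isdir(folder):
            continue  # skip a category whose folder is missing
        for name in sorted(os.listdir(folder)):
            if name.lower().endswith(IMAGE_EXTENSIONS):
                samples.append((os.path.join(folder, name), label))
    return samples


def split_dataset(samples, test_fraction=0.2, seed=42):
    """Shuffle reproducibly and return (train, test) lists."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]
```

Fixing the random seed keeps the split identical between runs, which makes accuracy numbers comparable when the network or the photo set is adjusted.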

Part III: Model modification and Implementation

After training our model, we implement a real-time video stream using OpenCV. Using faceNet, we detect the faces in each input image. We loop over the video stream, grabbing a frame and resizing it on each iteration. We then apply the face mask classifier that we built previously to determine whether the person is wearing a mask.
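
The per-frame logic can be sketched as follows. This is an illustrative outline under stated assumptions, not the code running on our Raspberry Pi: `detect_faces` and `classify_mask` are hypothetical callables standing in for the face detector and the trained mask classifier, and the classifier output is assumed to be ordered as (mask probability, no-mask probability).

```python
def clamp_box(box, width, height):
    """Clip an (x1, y1, x2, y2) box so it stays inside the frame."""
    x1, y1, x2, y2 = box
    return (max(0, x1), max(0, y1), min(width - 1, x2), min(height - 1, y2))


def label_faces(frame, frame_size, detect_faces, classify_mask):
    """Return a list of (box, label, confidence), one per detected face.

    detect_faces(frame)       -> iterable of (x1, y1, x2, y2)  (hypothetical)
    classify_mask(frame, box) -> (p_mask, p_no_mask)           (hypothetical)
    """
    width, height = frame_size
    results = []
    for box in detect_faces(frame):
        # Detections near the edge can extend past the frame borders.
        box = clamp_box(box, width, height)
        p_mask, p_no_mask = classify_mask(frame, box)
        if p_mask > p_no_mask:
            results.append((box, "Mask", p_mask))
        else:
            results.append((box, "No Mask", p_no_mask))
    return results
```

Passing the detector and classifier in as arguments keeps the loop logic testable on a desktop machine without a camera or a trained model.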

Additionally, we implement a capacity counter to record the current capacity of the store. Every time a customer comes in, the counter is incremented by one. Using a motion sensor at the exit, we decrement the counter when a customer leaves the store. If the maximum capacity is reached, the video stream displays a notification that the store is full and no one can enter until someone exits. The current capacity is also uploaded to the website.
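
A counter with these rules could look like the sketch below. The class name `CapacityCounter` and its method names are hypothetical and chosen for illustration. The sketch only models the counting rules and leaves out the motion sensor and the website upload.

```python
class CapacityCounter:
    """Tracks how many customers are inside a store with a fixed limit."""

    def __init__(self, max_capacity):
        if max_capacity < 1:
            raise ValueError("max_capacity must be at least 1")
        self.max_capacity = max_capacity
        self.count = 0

    @property
    def is_full(self):
        return self.count >= self.max_capacity

    def try_enter(self):
        """Admit one customer; return False (count unchanged) when full."""
        if self.is_full:
            return False
        self.count += 1
        return True

    def leave(self):
        """Record one exit, e.g. a motion-sensor event; never drops below 0."""
        if self.count > 0:
            self.count -= 1


counter = CapacityCounter(max_capacity=2)
counter.try_enter()   # first customer admitted
counter.try_enter()   # second customer admitted, store is now full
counter.try_enter()   # refused: returns False and the count stays at 2
counter.leave()       # exit sensor fires, count drops to 1
```

Guarding `leave` against negative values matters because a motion sensor can fire spuriously when nobody is actually inside.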

Website Design

Part I: Requirements

First, our website should show the real-time status inside the store, including the current customer count and the people violating the mask requirement. In addition, we add user signup and login/logout features to make the system more extensible. For example, the website could serve multiple users, each with their own Raspberry Pi. If we later add a login step to the mask detection program running on the Raspberry Pi, the website could use its login feature to show each user the status of their own store.

Part II: Tech stack used

We choose Flask as our web framework to handle routing and requests, based on the scale and scenario of our application. Flask is a micro framework written in Python that is easy to get started with and powerful enough to handle our use case. It also supports the Jinja template engine, whose template inheritance speeds up HTML template development by letting us reuse an HTML file as a module. For example, our home page template makes use of the navbar template file. Jinja also lets us write a for loop in HTML to display all the images in a directory. The code link is here.

Specifically, to keep track of the number of customers and the pictures of people not wearing a mask, the web server and our detection Python process need a way to communicate. We implement two ways here. For picture display, every time the detection process finds someone not wearing a mask, it writes the picture into the web server's static directory, and the server walks through that directory to display all the pictures. The other way uses RESTful-style HTTP requests. The example is shown below. Every time the server receives a GET request with one of the following URL suffixes, it increments or decrements the customer capacity.

RESTful way to increment/decrement customer capacity

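```python
from flask import redirect, url_for

# `app` (the Flask application) and the `customers` module are
# defined elsewhere in the project.

@app.route("/incr", methods=["GET"])
def incr():
    # a customer entered: add one to the shared capacity counter
    customers.customer.capacity += 1
    return redirect(url_for('login'))

@app.route("/decr", methods=["GET"])
def decr():
    # a customer left: subtract one from the shared capacity counter
    customers.customer.capacity -= 1
    return redirect(url_for('login'))
```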
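
The picture-display path described above, where the server walks a directory under its static folder, could be sketched like this. It is an assumption-laden illustration and not our server code: the function name `list_violation_images`, the `violations` subdirectory, the accepted extensions, and the newest-first ordering are all hypothetical choices.

```python
import os

IMAGE_EXTENSIONS = (".jpg", ".jpeg", ".png")  # assumed file types


def list_violation_images(static_dir, subdir="violations"):
    """Return image paths relative to static_dir, newest first."""
    found = []
    base = os.path.join(static_dir, subdir)
    # os.walk yields nothing if the directory does not exist yet.
    for dirpath, _dirnames, filenames in os.walk(base):
        for name in filenames:
            if name.lower().endswith(IMAGE_EXTENSIONS):
                full = os.path.join(dirpath, name)
                # Use forward slashes so the path works inside a URL.
                rel = os.path.relpath(full, static_dir).replace(os.sep, "/")
                found.append((os.path.getmtime(full), rel))
    found.sort(reverse=True)
    return [rel for _mtime, rel in found]
```

A Flask view could pass the returned list to a Jinja template, which then loops over it and builds each image URL with `url_for('static', filename=path)`.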

Physical Implementation

Part I: Camera

We use a Raspberry Pi camera to capture the customer's image. It is connected to the slot between the HDMI and Ethernet ports. We first update our Pi using sudo apt-get update and then use sudo raspi-config to enable the camera. After initializing the camera, we take our training photos with the command raspistill -o image.jpg.

Part II: LED and Buzzer

Using a breadboard, we integrate an LED and a buzzer into the system. When the system detects someone trying to enter without meeting the requirements, it triggers the LED and the buzzer for 5 seconds to inform the store owner.
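
The 5-second alert could be sketched as below. The pin numbers are hypothetical because the wiring is not specified in this report, and the sketch assumes an active buzzer that sounds when its pin is driven high. The `write` argument is any callable of the form `write(pin, level)`; the `write` method of a connected `pigpio.pi()` object has that shape, but whether the alert is driven exactly this way is an assumption.

```python
import time

# Hypothetical BCM pin numbers; adjust to the actual wiring.
LED_PIN = 26
BUZZER_PIN = 19


def sound_alert(write, duration=5.0, sleep=time.sleep):
    """Drive the LED and buzzer high for `duration` seconds, then low.

    `write(pin, level)` sets a GPIO output to 0 or 1.
    `sleep` is injectable so the function can be tested without waiting.
    """
    try:
        write(LED_PIN, 1)
        write(BUZZER_PIN, 1)
        sleep(duration)
    finally:
        # Always switch both outputs off, even if the wait is interrupted.
        write(LED_PIN, 0)
        write(BUZZER_PIN, 0)
```

Because `sound_alert` blocks for the full duration, calling it directly from the video loop would freeze the display; running it in a separate thread is one way around that.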

Part III: HDMI Display

To provide a better visual effect, we connect the Raspberry Pi to an 800×480 HDMI monitor that displays the image captured by the Raspberry Pi. The HDMI monitor uses 26 GPIO pins as its power input and is also connected with an HDMI cable. Photos of the HDMI cable and the pins used for the screen are shown below.

Testing

During the compile stage, we ran into many issues with package dependencies as well as compilation failures. We changed the Raspbian version to solve these problems. The process was very time-consuming and required many adjustments.

For the trained model, we tested accuracy by running the program with a mask on and with a mask off and recording the difference between the predictions and the ground truth. We adjusted the DNN and the input photos to improve accuracy. We also modified the counter implementation for better performance.
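
Comparing predictions against the ground truth can be made concrete with a small helper like the one below. It is a sketch for illustration: the function name `evaluate` and the label strings are hypothetical, and no measured results from our tests are implied.

```python
def evaluate(predictions, truths, positive="Mask"):
    """Return (accuracy, confusion counts) for two-class label lists."""
    if len(predictions) != len(truths):
        raise ValueError("predictions and truths must have the same length")
    if not truths:
        raise ValueError("need at least one sample")
    counts = {"tp": 0, "tn": 0, "fp": 0, "fn": 0}
    for pred, truth in zip(predictions, truths):
        if pred == positive:
            counts["tp" if truth == positive else "fp"] += 1
        else:
            counts["fn" if truth == positive else "tn"] += 1
    accuracy = (counts["tp"] + counts["tn"]) / len(truths)
    return accuracy, counts
```

Keeping the false positives and false negatives separate is useful here because the two errors have different costs: a false "Mask" lets an unmasked customer in, while a false "No Mask" only triggers an unnecessary alert.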

Result & Conclusion

We successfully achieved the main goal of the project. The “Under Cover” system can:

1) Detect whether a person entering the store is wearing a mask, using machine learning.

2) Respond according to the result: if the person is not wearing a mask, the LED and the buzzer are triggered.

3) Record the current capacity of the store, updating it whenever a customer enters or leaves.

4) Report the current capacity on a website, along with pictures identifying anyone who enters the store without a mask.

In conclusion, our project has all the major functionality we designed. However, we discovered that the system still has some minor problems, including overheating, image lag, and motion delay. These issues can be addressed by adding more components.

Overall, we believe our project can help local store owners prevent the spread of COVID-19 and maintain a safe environment at a very reasonable price.

Future Work

There are several things we can improve in this project in the future:

Firstly, we can add more pictures to the training set to improve the accuracy of the model, including different types of masks and people of different races. Doing so would further improve our system's performance.

Secondly, we can solder all the wires for the LED and the buzzer to save space and prevent short circuits. Soldering the motion sensor connections would also protect the sensor from physical damage.

Finally, we can scale our website system so that multiple Raspberry Pi detection modules report to one central website server. To do this, we need to modify our image upload process so that each Raspberry Pi sends its images to the server. This way, store owners can monitor the status of their own stores in real time.

Work Distribution

Project group picture

Zhuxian Mei

zm235@cornell.edu

Designed the website implementations & machine learning process.

Yixie Chen

yc2636@cornell.edu

Designed the physical implementation & machine learning process.

Parts List

- Raspberry Pi: provided

- Raspberry Pi Camera V2: provided

- HDMI Touch Screen: $28.00

- Wires & Buzzer: $13.00

Total: $41.00

References

PiCamera Documentation

OpenCV Installation

Neural-Network Guide

Pigpio Library

Website Prototype