Delivery Robot

John Macdonald (jm2424) and Jingyang Liu (jl3449)

Demonstration Video

Introduction

We designed, programmed, and built a delivery robot which is operated remotely through a web interface. This could be useful, for example, in a hotel, where an attendant at the front desk could deliver an item to a guest without leaving the desk, so that other guests can still be served.

Project Objectives

Primary Objective: Teleoperated Delivery

- Construct a two-wheeled mobile robot which can traverse an indoor setting

- Provide a touchscreen mounted at a comfortable height so that a user can request a delivery, or ask the robot to return to its home location after making a delivery

- Implement an interface for a user to remotely operate the robot wirelessly

- Implement an interface for a user to view a real-time video feed from a front-facing robot camera

Secondary Objective: Autonomous Delivery

- Implement computer vision software to recognize black and white barcode markers to indicate the locations of rooms

- Implement navigation software to autonomously navigate to rooms using visual barcodes as landmarks

Design and Implementation

Structure

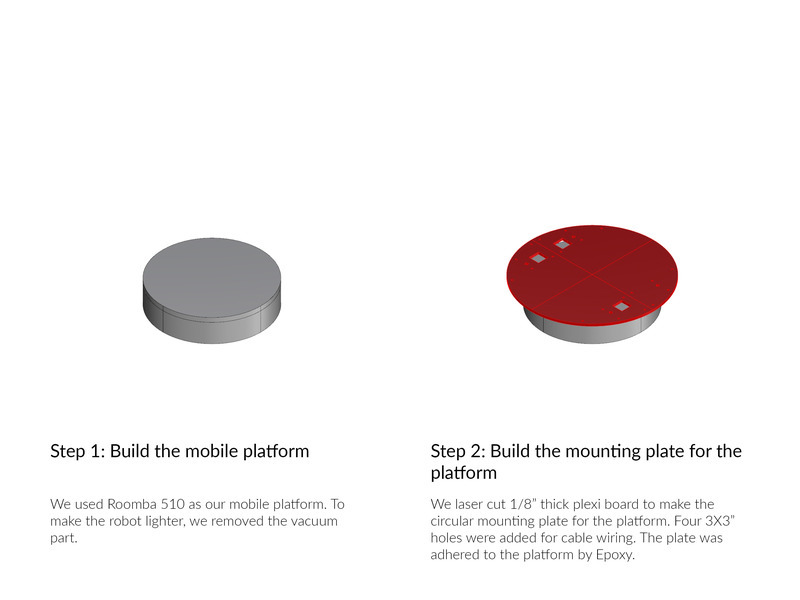

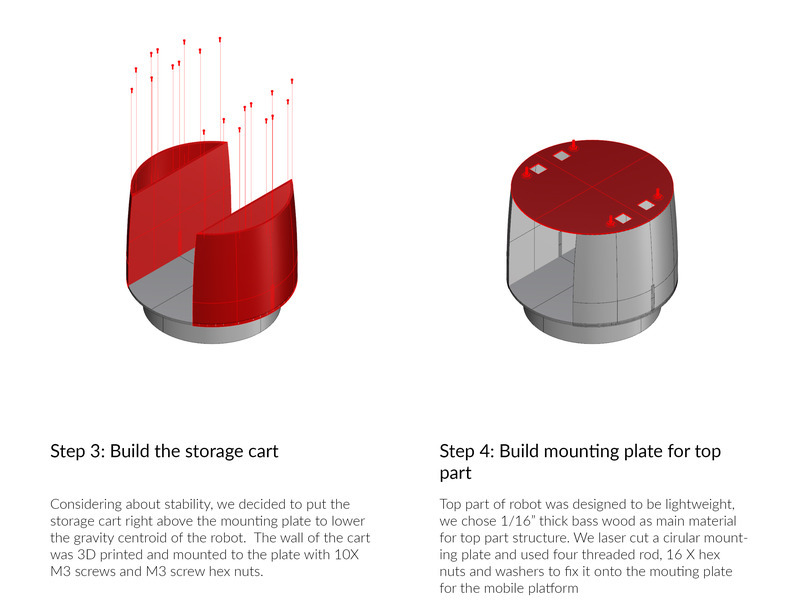

Overview

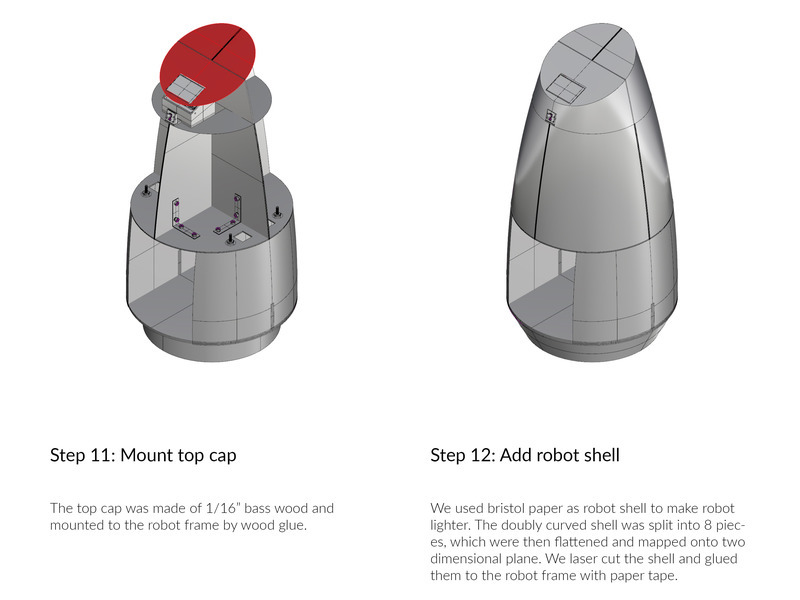

We used an iRobot Roomba 510 as a mobile base for the robot. On top of the base, we built a structure consisting of a laser-cut wooden frame and 3D printed panels reinforced by steel rods. Paper and paper tape were used to create a smooth outer surface. A cavity in the lower part of the robot was added to hold the item to be delivered, while maintaining a low robot center of gravity.

Industrial Design

Robot shapes considered for the final design.

Examples of robots and devices which inspired our design.

Many robots face skepticism from people, who see them as cold and heartless machines. We wanted our robot to have a sleek, friendly, and inviting exterior which would make a human feel comfortable interacting with it. This is very important for a robot in a hospitality setting, for example. We took inspiration from several robots and devices, including Jibo, Kuri, Relay, the Xperia Agent concept, and Google Home. We decided on an all-white, tapered design with an angled top in order to face the touchscreen towards the user. In order to keep the center of gravity low, we included a lower compartment for items.

Electronics

Diagram of the electronics architecture.

The class-provided Raspberry Pi 3 was used as our main computer. It includes an integrated WiFi radio, which enabled us to meet our requirement of remote wireless communication. It also gave us an easy route to meet the touchscreen goal, as we were provided with the PiTFT touchscreen as well. Finally, it allowed us to use the Raspberry Pi Camera for both the remote user video feed and the visual marker recognition for autonomous navigation.

We wanted a robot base with wheel encoders in order to enable wheel odometry, both for remote user assistance features and for autonomous navigation. We evaluated three main routes: (1) adding a radial black-and-white pattern on the inside of our existing robot servo wheels and reading it with an infrared optical sensor; (2) purchasing DC motors and integrating encoders with the motors; and (3) controlling an old iRobot Roomba, which one team member already had on hand and which contains drive wheels with integrated wheel encoders. Option (1) has the drawback of being sensitive to ambient lighting conditions, while option (2) required purchasing and integrating several hardware elements, which would add cost to our BOM and require time to mount and integrate electrically. The Roomba, on the other hand, had reliable integrated encoders with a resolution of 508 ticks per revolution. Because it was a used, broken, 10-year-old model which refused to clean, we could price it very cheaply. Finally, communication with the Roomba only required a USB to TTL serial converter, and could be done easily via the public iRobot Create Open Interface.

Finally, an Arduino was used to read angular velocity from a gyroscope using I2C, and this information was sent to the Raspberry Pi over USB. The gyroscope was added to improve the robot angle estimate after testing showed that the angle estimate from the wheel odometry was inaccurate.

Software

Overview

The software used the AUV software stack as a framework, since one team member had previously been a member of the Cornell Autonomous Underwater Vehicle team. This framework in particular provided a library for lightweight shared memory (SHM) between processes using the POSIX shared memory API and pthreads for mutexes and condition variables. This was used to link processes in a multi-process architecture. A multi-process architecture was chosen (1) to utilize the four cores of the RPi and (2) to manage the different update rates of the hardware. The Roomba, gyroscope, camera, and touchscreen all had different rates of input and output, which would be difficult to handle properly in one main loop. Instead, independent processes managed the tasks associated with each of these devices and communicated via SHM.

Create Daemon

Notable Inputs

- motor_desires

- int left_wheel

- int right_wheel

Notable Outputs

- encoders

- double timestamp

- int counts_left

- int counts_right

The Create daemon, or created, uses the pycreate2 Python library to read sensor data from the Roomba over USB, and write it to the corresponding SHM groups, as well as read motor velocity commands from the motor_desires SHM group and send them to the Roomba. The pycreate2 library provides Python functions to send the command packets to the Roomba, and read sensor packets. The packet definitions are provided by iRobot in the Create 2 Open Interface.

Additionally, the daemon disables Roomba fail-safe functionality via API commands. The Roomba's bumper is broken and always reads "pressed," which means that the Roomba will ignore commands to drive forward if fail-safes are enabled. The daemon also queries the Roomba for basic system state. For example, it reads the remaining battery charge in mAh and uses this to compute the remaining battery percent.
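The battery percentage calculation described above can be sketched as follows. This is a minimal illustration, not the daemon's actual code: the function name `battery_percent` and its parameters are hypothetical, and it assumes that an estimate of the battery capacity in mAh is available alongside the remaining charge.

```python
def battery_percent(charge_mah, capacity_mah):
    """Return the remaining battery charge as a percentage from 0 to 100.

    Both arguments are in mAh. The names are illustrative assumptions.
    """
    if capacity_mah <= 0:
        # Capacity unknown or invalid: report empty rather than divide by zero.
        return 0.0
    percent = 100.0 * charge_mah / capacity_mah
    # Clamp in case the reported charge slightly exceeds the capacity.
    return max(0.0, min(100.0, percent))
```

Clamping the result keeps the value shown to the user within a sensible range even if the two readings are momentarily inconsistent.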

Locator

Notable Inputs

- encoders

- int counts_left

- int counts_right

- gyro_integrated

- double theta

Notable Outputs

- locator

- double x

- double y

- double theta

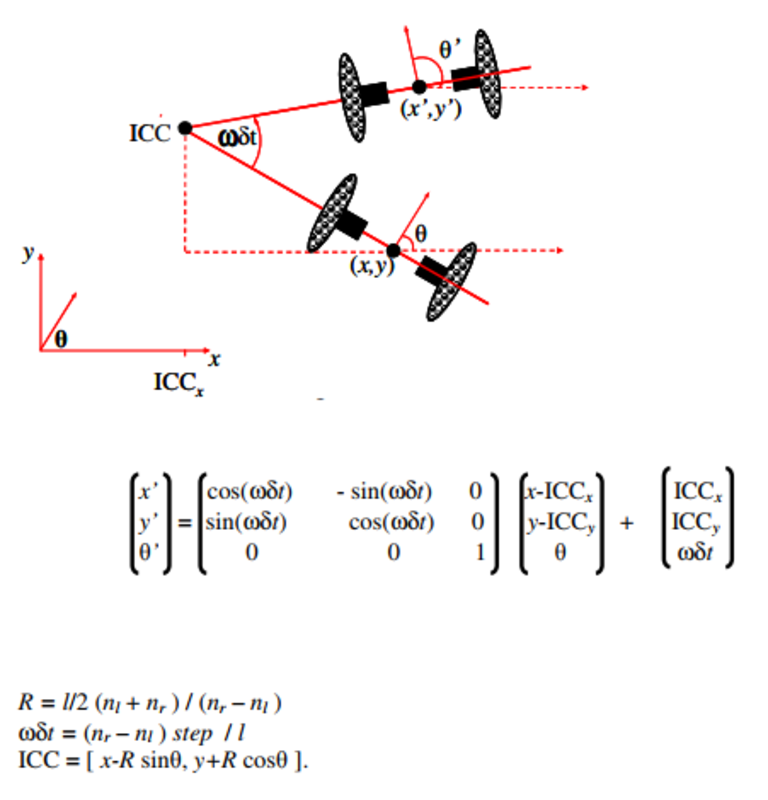

The locator computes the robot position by integrating wheel encoder counts. This is done by assuming that the velocity is constant over every locator timestep. This assumption results in the robot taking a circular arc path over the timestep. The position delta is then computed by modeling the movement as a rotation about the center of a coordinate system located at the center of rotation of the robot, and constructing a corresponding rotation matrix. The angle delta is calculated from the integrated gyro reading. NumPy is used for matrix multiplication. A more thorough description of the mathematics can be found here. The crucial equations and a diagram are replicated below. The following constant definitions were used: l is the distance between the two wheels, about 23 centimeters for a Roomba 510; step is the distance that a Roomba wheel moves for each encoder tick, which is about 0.4 millimeters.


Kinematics equations for robot position estimation. (Source)
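The arc-based position update can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not the locator's actual code: the function name `locator_update` and its arguments are hypothetical, the 2x2 rotation matrix is written out term by term using only the standard library (the locator itself uses NumPy for this product), and the wheel-only fallback for the angle delta is an assumption added so that the sketch also works without a gyro reading.

```python
import math

WHEEL_BASE_M = 0.23   # l: distance between the two wheels (about 23 cm)
STEP_M = 0.0004       # step: wheel travel per encoder tick (about 0.4 mm)


def locator_update(x, y, theta, ticks_left, ticks_right, gyro_theta=None):
    """Advance the pose (x, y, theta) by one locator timestep.

    ticks_left and ticks_right are encoder count deltas since the last step.
    gyro_theta is the latest integrated gyro angle in radians; if it is None,
    the angle delta is estimated from the wheels instead (an assumption).
    """
    d_left = ticks_left * STEP_M
    d_right = ticks_right * STEP_M
    d_center = (d_left + d_right) / 2.0
    if gyro_theta is None:
        d_theta = (d_right - d_left) / WHEEL_BASE_M
    else:
        d_theta = gyro_theta - theta
    new_theta = theta + d_theta

    if abs(d_theta) < 1e-9:
        # No rotation: the arc degenerates to a straight line.
        return (x + d_center * math.cos(theta),
                y + d_center * math.sin(theta),
                new_theta)

    # Radius of the arc travelled by the robot center.
    radius = d_center / d_theta
    # Center of rotation, to the left of the robot for a positive radius.
    cx = x - radius * math.sin(theta)
    cy = y + radius * math.cos(theta)
    # Rotate the position about the center of rotation by d_theta.
    cos_d, sin_d = math.cos(d_theta), math.sin(d_theta)
    rx, ry = x - cx, y - cy
    return (cx + cos_d * rx - sin_d * ry,
            cy + sin_d * rx + cos_d * ry,
            new_theta)
```

For example, 1000 ticks on both wheels with no change in angle moves the pose about 0.4 meters straight ahead along the current heading.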

Tracker GUI

Notable Inputs

- locator

- double x

- double y

- double theta

The tracker provides a convenient way to visualize the robot's position estimate. It is adapted from the Position Tracker in the AUV software stack, and uses Cairo to draw a visualization of the robot position.

Controller

Notable Inputs

- control_desires

- double linear_vel

- double theta

- locator

- double theta

Notable Outputs

- motor_desires

- int left_wheel

- int right_wheel

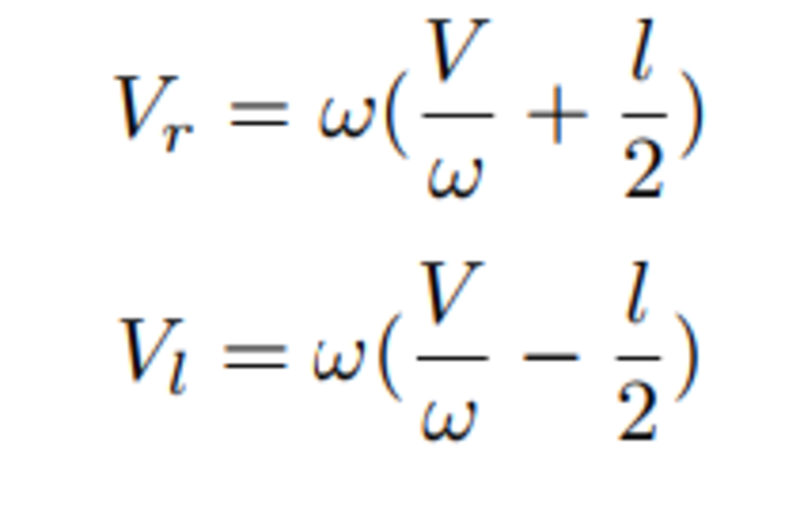

The controller reads the desired control input, contained in the control_desires.linear_vel and control_desires.theta SHM variables, and writes the corresponding wheel velocities motor_desires.left_wheel and motor_desires.right_wheel to SHM. A PID controller, which takes the angle locator.theta as an input and outputs a desired angular velocity, controls the robot angle. Then, given the desired linear and angular velocities, the corresponding wheel velocities are calculated according to the following equations:
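As a rough sketch of this control step, the code below uses the standard differential-drive relations, in which the left wheel speed is v - ωl/2 and the right wheel speed is v + ωl/2 for linear velocity v, angular velocity ω, and wheel separation l. It is an illustration under assumptions rather than the controller's actual code: the class and function names, the PID gains, and the choice of meters per second in and integer millimeters per second out are all hypothetical.

```python
import math

WHEEL_BASE_M = 0.23  # l: distance between the wheels, as used by the locator


class AnglePID:
    """PID loop that turns an angle error into a desired angular velocity."""

    def __init__(self, kp, ki=0.0, kd=0.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, desired_theta, measured_theta, dt):
        # Wrap the error into [-pi, pi] so the robot turns the short way.
        diff = desired_theta - measured_theta
        error = math.atan2(math.sin(diff), math.cos(diff))
        self.integral += error * dt
        if self.prev_error is None or dt <= 0:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def wheel_velocities(linear_vel, angular_vel, wheel_base=WHEEL_BASE_M):
    """Convert body velocities (m/s, rad/s) to integer wheel speeds in mm/s.

    Returns (left_wheel, right_wheel). The mm/s output unit is an assumption.
    """
    left = linear_vel - angular_vel * wheel_base / 2.0
    right = linear_vel + angular_vel * wheel_base / 2.0
    return int(round(left * 1000)), int(round(right * 1000))
```

In one controller iteration, the output of `AnglePID.update` would be passed as `angular_vel` to `wheel_velocities`, and the resulting pair written to the motor_desires group.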

Raspberry Pi Camera Daemon

Notable Outputs

- forward camera buffer

The camera daemon reads images from the Raspberry Pi camera, flips them 180 degrees to compensate for the physical mounting, and writes them to the AUV vision system framework. This is accomplished using the picamera Python library. The AUV vision system framework stores camera images in a triple buffered shared memory structure, and allows reading from and writing to these buffers.

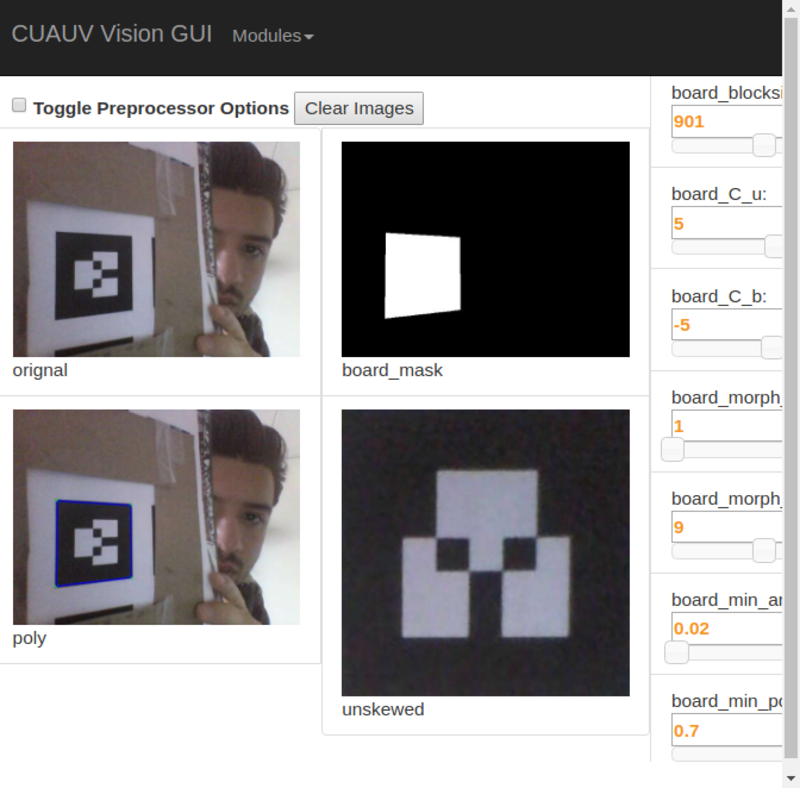

Vision GUI

The Vision GUI is adapted from the AUV software stack. It is a webserver implemented using Flask. It reads camera images from the vision system and sends them to the web client via web sockets using the Socket.IO library. It is used by the remote user to view a video feed coming from the robot while controlling it.

Marker Vision Module

Notable Inputs

- forward camera buffer

Notable Outputs

- marker_results

- int center_x

- int center_y

The marker vision module processes camera images and detects if the image contains a marker. If it does, it writes information about the marker such as its position in the camera frame to the marker_results SHM group. The vision module recognizes black parts of the image using adaptive thresholding, that is, it computes a threshold relative to the average image intensity. It then uses the Douglas-Peucker algorithm to identify black quadrilaterals. OpenCV is used to ease implementation of computer vision algorithms.
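To illustrate the quadrilateral test, here is a small pure-Python sketch of Douglas-Peucker simplification applied to a closed contour. It is not the module's code, which relies on OpenCV: the function names, the `epsilon` tolerance, and the strategy of splitting the closed contour at the point farthest from its first point are all assumptions made for this example.

```python
import math


def _point_line_distance(p, a, b):
    """Perpendicular distance from point p to the line through a and b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    length = math.hypot(bx - ax, by - ay)
    if length == 0.0:
        return math.hypot(px - ax, py - ay)
    return abs((bx - ax) * (ay - py) - (ax - px) * (by - ay)) / length


def douglas_peucker(points, epsilon):
    """Simplify an open polyline, keeping points farther than epsilon."""
    if len(points) < 3:
        return list(points)
    distances = [_point_line_distance(p, points[0], points[-1])
                 for p in points[1:-1]]
    worst = max(range(len(distances)), key=distances.__getitem__)
    if distances[worst] <= epsilon:
        return [points[0], points[-1]]
    split = worst + 1
    left = douglas_peucker(points[:split + 1], epsilon)
    right = douglas_peucker(points[split:], epsilon)
    return left[:-1] + right


def simplify_closed(contour, epsilon):
    """Simplify a closed contour given as a list of (x, y) points."""
    start = contour[0]
    far = max(range(len(contour)),
              key=lambda i: math.hypot(contour[i][0] - start[0],
                                       contour[i][1] - start[1]))
    first = douglas_peucker(contour[:far + 1], epsilon)
    second = douglas_peucker(contour[far:] + [start], epsilon)
    merged = first[:-1] + second[:-1]
    # The arbitrary start point is always kept; drop it if it is redundant.
    if len(merged) > 3 and _point_line_distance(
            merged[0], merged[-1], merged[1]) <= epsilon:
        merged = merged[1:]
    return merged


def is_quadrilateral(contour, epsilon=2.0):
    """Return True if the simplified contour has exactly four vertices."""
    return len(simplify_closed(contour, epsilon)) == 4
```

A contour traced around a square marker reduces to its four corners, while a triangle or a heavily curved blob does not, which is what lets the simplified vertex count act as a quadrilateral test.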

Touchscreen UI

Design

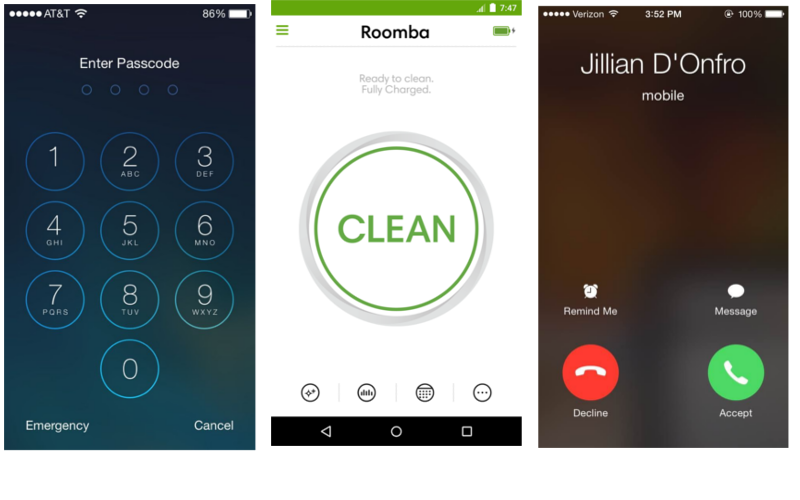

Sources of inspiration for the touchscreen UI.

Currently, many robots are complex, with complex user interfaces and theories of operation. We, on the other hand, wanted to create a friendly robot which could be used by anyone. We therefore wanted the UI to be very intuitive, so that the robot could require no training to operate. We took inspiration from several successful touchscreen interfaces. Primarily, we looked at the iOS mobile operating system, known to be very simple and easy-to-use, and the mobile app for the iRobot Roomba, a successful consumer robot which is easy to use. We especially liked the large "Clean" button in the Roomba interface, which makes it clear that the primary function of the robot is to clean, and requesting the robot to clean requires only pressing the button.

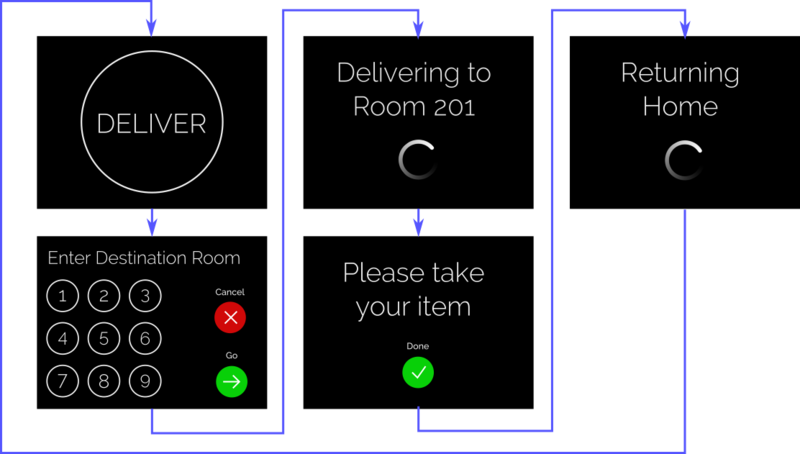

Implementation

Initial mockup of the UI over the course of a delivery with arrows to show order of the prompts

Notable Inputs

- delivery_state

- int state

Notable Outputs

- delivery_state

- int state

- int room_num

We used pygame to implement the touchscreen UI. We were familiar with pygame, having used it in the labs, and were confident that it would integrate well with the touchscreen. The UI is implemented as a state machine whose current state is read from and written to a SHM group, delivery_state. This allows the remote user to learn when a delivery is requested by reading from SHM, and to alert the user at the robot of the delivery's arrival by writing to SHM. Graphics for the UI were drawn using the Inkscape vector graphics program. The thin Raleway font was chosen to evoke a sleek, yet friendly, appearance.
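A state machine of this kind might be organized as in the sketch below. The state names, their numeric values, and the allowed transitions are hypothetical, since the actual values of delivery_state.state are not listed here, and a plain dictionary stands in for the SHM group.

```python
from enum import IntEnum


class DeliveryState(IntEnum):
    """Hypothetical values for delivery_state.state."""
    IDLE = 0        # at home, waiting for a delivery request
    REQUESTED = 1   # a delivery to room_num was requested on the touchscreen
    DELIVERING = 2  # the remote operator is driving to the room
    ARRIVED = 3     # the operator reports arrival; the screen alerts the guest
    RETURNING = 4   # the robot was asked to return to its home location


# Each state maps to the set of states that may follow it.
TRANSITIONS = {
    DeliveryState.IDLE: {DeliveryState.REQUESTED},
    DeliveryState.REQUESTED: {DeliveryState.DELIVERING},
    DeliveryState.DELIVERING: {DeliveryState.ARRIVED},
    DeliveryState.ARRIVED: {DeliveryState.RETURNING},
    DeliveryState.RETURNING: {DeliveryState.IDLE},
}


def set_state(shm, new_state, room_num=None):
    """Write new_state to the stand-in SHM group if the transition is legal.

    shm is a dict with "state" and "room_num" keys standing in for the
    delivery_state group. Returns True if the state was changed.
    """
    current = DeliveryState(shm["state"])
    if new_state not in TRANSITIONS[current]:
        return False
    shm["state"] = int(new_state)
    if room_num is not None:
        shm["room_num"] = room_num
    return True
```

Keeping the state in one shared group means the touchscreen process and the remote operator's tools only need to agree on the integer values, not on each other's internals.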

Arduino Daemon

Notable Outputs

- gyro

- int theta_rate

- double timestamp

The Arduino daemon reads serial messages containing the gyro reading and timestamp from the Arduino using the PySerial library and writes the corresponding values to SHM. The timestamp is recorded so that any latency between the gyro and shared memory on the RPi is taken into account, increasing the accuracy of the angle estimate.

Integrator

Notable Inputs

- gyro_settings

- int theta_rate_bias

- int theta_rate_idle_mag

- gyro

- int theta_rate

- double timestamp

Notable Outputs

- gyro_integrated

- double theta

The integrator integrates the angular velocity from the gyro using a trapezoidal approximation to obtain an absolute angle. It was implemented to provide a better absolute angle estimate for autonomous navigation, since the estimate from the wheel odometry drifts from the actual value after just a small number of turns. The input signal is offset by gyro_settings.theta_rate_bias, the average signal from the gyro when there is no movement. In addition, a threshold, gyro_settings.theta_rate_idle_mag, is set to the maximum magnitude of the gyro signal, relative to the average idle signal, that has been observed while the robot is idle. This prevents the angle estimate from drifting when the robot remains stationary.
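The integration scheme can be sketched as follows. This is a minimal illustration, not the integrator's actual code: the class name `GyroIntegrator`, the `scale` factor for converting raw gyro units to an angle, and the choice to treat readings within the idle threshold as exactly zero are assumptions.

```python
class GyroIntegrator:
    """Trapezoidal integration of a biased gyro rate with an idle deadband."""

    def __init__(self, bias, idle_mag, scale=1.0):
        self.bias = bias          # plays the role of theta_rate_bias
        self.idle_mag = idle_mag  # plays the role of theta_rate_idle_mag
        self.scale = scale        # raw units to angle units (assumed)
        self.theta = 0.0
        self.prev_rate = 0.0
        self.prev_time = None

    def update(self, raw_rate, timestamp):
        """Fold one gyro sample into the angle estimate and return it."""
        rate = raw_rate - self.bias
        if abs(rate) <= self.idle_mag:
            # Within the idle noise band: treat the robot as not rotating.
            rate = 0.0
        if self.prev_time is not None:
            dt = timestamp - self.prev_time
            # Trapezoid rule: average of the last two rates times elapsed time.
            self.theta += 0.5 * (self.prev_rate + rate) * dt * self.scale
        self.prev_rate = rate
        self.prev_time = timestamp
        return self.theta
```

Using the sample timestamps rather than a fixed period means that an occasional late or dropped serial message changes the width of one trapezoid instead of silently skewing the estimate.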

Testing

We tested our robot by driving it using the Vision GUI in the hallway of the second floor of Phillips Hall. The largest problem we noticed was the lag between the robot and the laptop. Since we were initially using the Cornell public WiFi, RedRover, we thought this might be the bottleneck. We therefore decided to test with our own wireless router. This, unfortunately, did not improve the lag. We took further steps, including reducing the resolution and framerate of the images. This did little to fix the problem. Use of a higher performance webserver, or of a different WiFi adapter on the robot, might reduce the lag. The video feed, however, is sufficient to control the robot if the user is careful.

Result

Our final product had a sleek paper shell reinforced by a wooden skeleton and steel rods for extra stability. It also featured wireless video streaming and vehicle control. A sleek touchscreen interface enabled the user to interact with the robot. Overall, we are very happy with the result of our project. We made significant progress towards autonomous navigation, implementing a program to recognize visual markers and compute the robot position using wheel odometry. We also integrated a gyro and wrote a program to integrate gyro velocity to compute a more accurate angle estimate.

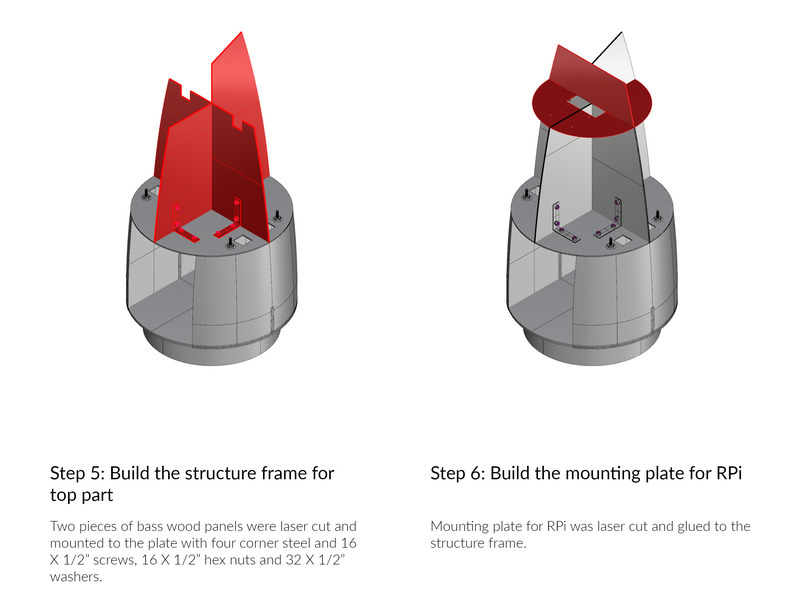

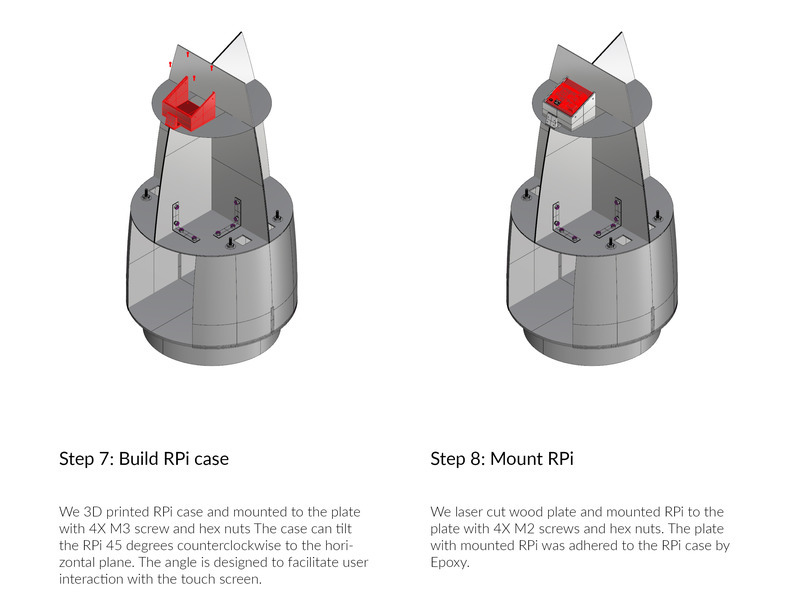

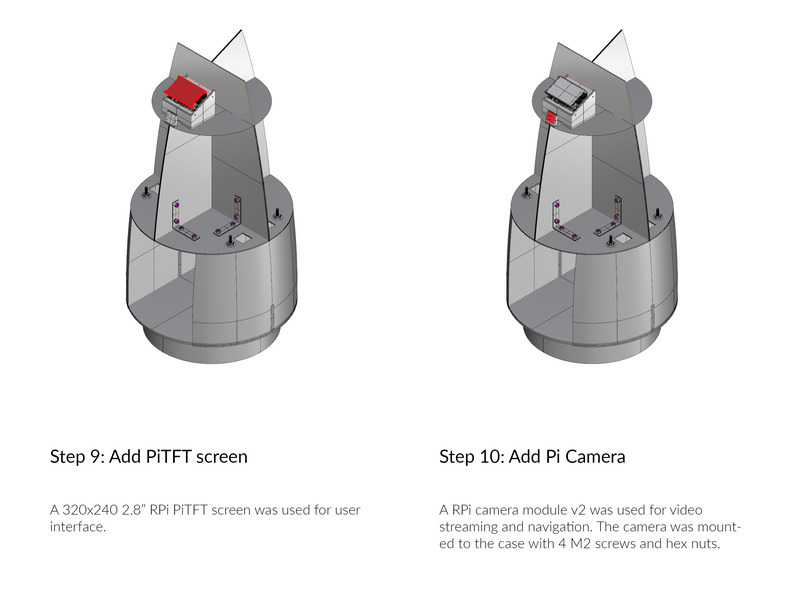

Assembly

Future Work

One team member, John, plans to take the robot home and work on making it navigate autonomously over the winter break. Jingyang is also looking to use the robot next semester for possible human-robot interaction research. Overall, we are very pleased with the result of our project, and look forward to continuing our work on it in the future.

In terms of improving the teleoperation of the robot, several steps could be taken. The webserver should be evaluated for possible bottlenecks causing lag. In addition, different wireless adapters could be installed and evaluated for possible performance gains. A secondary downward-facing camera could be installed to make it easier to see when the robot is near an obstacle. In addition, wide-angle lenses could be installed on the cameras in order to get a better view of the surroundings.

Work Distribution

Project Group Picture

John Macdonald

jm2424@cornell.edu

Designed and implemented software.

Jingyang Liu

jl3449@cornell.edu

Designed and implemented hardware.

After graduating from Cornell University with a Master of Architecture in 2015, Jingyang became the first candidate for the Master of Science in Matter Design Computation at Cornell University, a program supported by both Autodesk and the National Science Foundation (NSF). Jingyang is currently the senior research associate at the Sabin Design Lab at Cornell. His research focuses on design computation and robotic fabrication.

Parts List

Note that due to our prior interest in building things, we had several significant parts already on hand. These items were therefore not in new condition, and they were priced according to eBay listings for that reason.

- Raspberry Pi $0.00 (Provided)

- Pi TFT $0.00 (Provided)

- Cell Phone Battery $0.00 (Provided)

- Breadboard $0.00 (Provided)

- Used iRobot Roomba 510, Non-Functional $45.00 (was given to team member by neighbor, priced according to this eBay listing)

- Removed Vacuum from Roomba -$29.95 (priced according to this eBay listing)

- Adafruit 9-DOF Accel/Mag/Gyro+Temp Breakout Board - LSM9DS1 - $14.95

- Used Arduino Uno $10.99 (team member had on hand, priced according to this eBay listing)

- Used Pi Camera v2 $17.99 (team member had on hand, priced according to this eBay listing)

- FTDI Serial TTL-232 USB Cable $17.95

- Mini-DIN Connector Cable for iRobot Create 2 $6.95

- 3D print filament 35g $10

- M2 screws X4 $1

- M2 hex nuts X4 $1

- M2 washer X4 $1

- M2 screws X14 $2

- M2 hex nuts X14 $2

- M2 washer X14 $2

- ¼” threaded rod 32” length x2 $10

- ¼” hex nuts x16 $2

- ¼” washers x16 $2

- ½” hex nuts x16 $2

- ½” screws x16 $2

- ½” washers x16 $2

- Plexi 12”X18” $10

- Bass wood panel $5

- Wires - Provided in lab

Total: $111.93

Code Repository

All of our software is hosted in a Bitbucket repository.