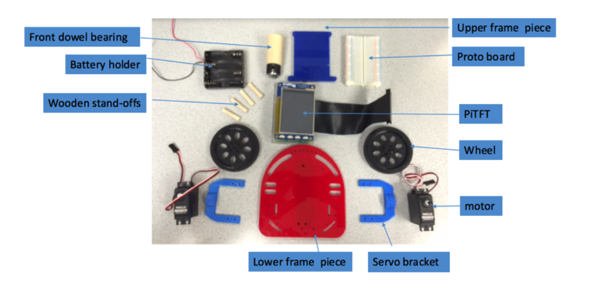

Hardware for the robot frame

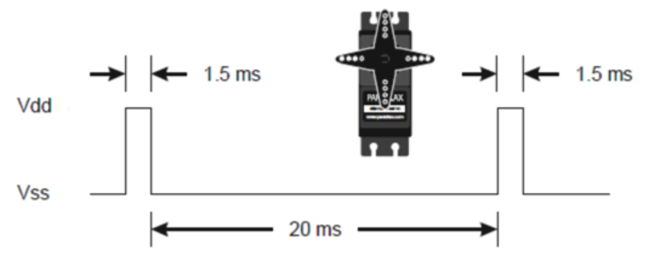

In this design, general-purpose input/output (GPIO) pins drive continuous-rotation Parallax servo motors using pulse-width modulation (PWM). The servo motors form the foundation of the robot structure, and a Raspberry Pi controls their inputs and outputs. Each servo reads the PWM signal, which switches rapidly between on and off, to determine its speed and direction. The same kind of PWM signal that sets the position of a standard servo sets the speed and direction of a continuous-rotation servo.

PWM is initialized with the GPIO.PWM([pin], [frequency]) function. The frequency, in hertz (Hz), is the number of pulses generated per second. One cycle is measured from the onset of one pulse to the onset of the following pulse, that is, from when the signal starts to rise to the next time it starts to rise. A complete wave cycle therefore includes the “on” time, the “off” time, and the transitions in between.

$$
\begin{aligned}
\text{Frequency [Hz]} &= \frac{1000}{1.5\,\text{ms} + 20\,\text{ms}} \\
&= \frac{1000}{21.5\,\text{ms}} \\
&\approx 46.51
\end{aligned}
$$

Diagram for a centered servo

The PWM output is adjusted with the pwm.ChangeDutyCycle([duty cycle]) function. The duty cycle is the percentage of each cycle during which the signal is “high”, or “on”. Frequency and duty cycle are the two key parameters of a PWM signal. PWM on a pin is turned off with the pwm.stop() command. The RPi.GPIO library was imported in the code, and GPIO pins 5 and 6 were selected and configured as outputs. An LED was added to each output pin. The LED protected the pin by preventing excess current from being drawn from it.

$$
\begin{aligned}
\text{Duty cycle [\%]} &= \frac{1.5\,\text{ms}}{1.5\,\text{ms} + 20\,\text{ms}} \times 100 \\
&\approx 6.98
\end{aligned}
$$
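The frequency and duty-cycle calculations, together with the RPi.GPIO calls named in the text, can be sketched as follows. This is a minimal illustration rather than the project's actual code. The helper names `pwm_settings` and `run_servo`, the BCM pin numbering, and passing the GPIO module in as an argument are all assumptions made for this example.

```python
import time

PAUSE_MS = 20.0  # low time between pulses, as used in the equations


def pwm_settings(pulse_ms, pause_ms=PAUSE_MS):
    """Return (frequency in Hz, duty cycle in %) for one pulse width."""
    period_ms = pulse_ms + pause_ms        # onset-to-onset time of one cycle
    frequency_hz = 1000.0 / period_ms      # cycles per second
    duty_percent = pulse_ms / period_ms * 100.0
    return frequency_hz, duty_percent


def run_servo(gpio, pin, pulse_ms, seconds):
    """Drive one servo for a fixed time, then stop PWM on the pin.

    `gpio` is expected to be the RPi.GPIO module. Passing it in (an
    assumption of this sketch) keeps the code importable on machines
    that do not have the library installed.
    """
    frequency_hz, duty_percent = pwm_settings(pulse_ms)
    gpio.setmode(gpio.BCM)                 # assumption: BCM pin numbering
    gpio.setup(pin, gpio.OUT)              # configure the pin as an output
    pwm = gpio.PWM(pin, frequency_hz)      # initialize PWM on the pin
    pwm.start(duty_percent)                # begin pulsing
    try:
        time.sleep(seconds)
    finally:
        pwm.stop()                         # turn PWM off on that pin
        gpio.cleanup(pin)                  # release the pin
```

On a Raspberry Pi this could be called as `import RPi.GPIO as GPIO` followed by `run_servo(GPIO, 5, 1.5, 2.0)`. Because the low time is fixed at 20 ms, any change in pulse width also changes the period, so both the frequency and the duty cycle would need to be recomputed for each new pulse width.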

FJMbot is driven over an internet connection; therefore, a webpage was designed as the telerobotic interface. The webpage comprises three options: Home, Motor Control, and Voice.

The Home option returns the user to the home page or quits the control panel.

Motor Control displays video of the remote location and includes a control panel for commanding the robot. The picamera is set up, and the command-line application mjpg-streamer streams the video. The initial plan was to use a socket to record to a network stream. The control panel contains 9 buttons with unique functions. The set of buttons on the user's left commands the robot to move forward, move backward, pivot left, pivot right, or stop. The buttons on the right increase speed, decrease speed, quit, or start the system.
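One way the motion and speed buttons could map onto servo pulse widths is sketched below. The report does not give these details, so everything except the 1.5 ms centered pulse is hypothetical: the command names, the step size, the offset limit, and the sign convention (which assumes the two servos are mounted mirrored, so driving straight needs opposite rotation directions).

```python
STOP_MS = 1.5         # centered pulse width from the calculations above
STEP_MS = 0.05        # assumed change per press of a speed button
MAX_OFFSET_MS = 0.2   # assumed limit on how far the pulse may move from center

# Rotation direction of the (left, right) servo for each motion command.
# +1 and -1 are opposite rotations; the signs are an assumption.
DIRECTIONS = {
    "forward": (+1, -1),
    "backward": (-1, +1),
    "pivot_left": (-1, -1),
    "pivot_right": (+1, +1),
    "stop": (0, 0),
}


def pulse_widths(command, offset_ms):
    """Return (left_ms, right_ms) pulse widths for a motion command."""
    left_dir, right_dir = DIRECTIONS[command]
    return (STOP_MS + left_dir * offset_ms, STOP_MS + right_dir * offset_ms)


def change_speed(offset_ms, faster):
    """Move the offset one step up or down, clamped to [0, MAX_OFFSET_MS]."""
    step = STEP_MS if faster else -STEP_MS
    return min(MAX_OFFSET_MS, max(0.0, offset_ms + step))
```

Keeping the speed as a single offset from the centered pulse means the speed buttons only change one number, and every motion command reuses it for both wheels.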

Voice is used to send a voice note to the remote location. Due to time constraints, real-time communication was not implemented. The message is recorded on the user's device and then submitted. Once the message has been submitted, it plays automatically on the Raspberry Pi and can be sent to a speaker so that it can be heard. A better alternative for streaming voice would be a socket-based client-server connection.

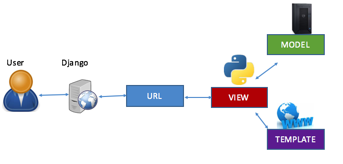

Django is used as the web framework to control the robot at a distance over the internet. This Python web framework follows the Model-View-Template (MVT) pattern. When the user clicks a button, a request is sent to Django, which maps the requested URL to a view. The request is passed to the model to be validated and stored. From there, it returns to the view, which processes the request and passes the result to the template. The template layer then renders the HTML used to control the motion of the robot.

Components of the MVT pattern

A robot incorporating a Raspberry Pi, picamera, speaker, USB microphone, and power bank was successfully built and controlled through a webpage. The picamera provides a real-time view of the remote location through the robot, and the user was able to send voice notes to that location.

The initial plan was to attach an environmental sensor to the robot to measure and report the remote location’s temperature, light, humidity, altitude, and pressure. Because the order was delayed, this plan was changed. Apart from the missing sensor, the robot delivers the expected results overall.

FJMbot can be improved by building a more robust platform and adding the environmental sensor. The camera settings could also be changed to adjust automatically to the luminosity of the remote location. Adding a voice-command mode to the control panel, allowing the user to command the robot without clicking any button, would also be practical.

I would like to thank Joe Skovira and his TAs, Brendon Jackson, Steven (Dongze) Yue, Mei Yang, and Arvind Kannan, for their continuous help during the development of this project. Without their support, this project would not have been possible.